Analytics

Medallion Architecture in Excel

Apply the Medallion Architecture to Excel: use the three-tab rule to separate raw data, logic, and output for cleaner, maintainable spreadsheets.

What is Retrieval-Augmented Generation (RAG)?

What is RAG? Learn how RAG combines retrieval, augmentation & generation to ground GenAI responses in your data while reducing hallucinations & improving accuracy.

The Data Product Canvas: The Theory Behind The Canvas

The Data Product Canvas fuses the Business Model Canvas with Data Mesh's 'data as a product' principle, combining visual strategic collaboration with product-minded data ownership.

The Data Product Canvas in Action

See the Data Product Canvas in action with a real-world scenario. Follow along as we work through each building block to design a high-impact, feasible data product for a national garden center chain facing revenue challenges.

The Data Product Canvas: Deep Dive into the Building Blocks

The Data Product Canvas has nine building blocks, best completed right-to-left starting with Audience and Actionable Insight, to keep data products purpose-driven and user-centred.

The Data Product Canvas: Stop Building Data Products That Fail

Turn data initiatives into business success stories with the Data Product Canvas. This practical framework helps teams design data products that deliver real value, avoid common pitfalls, and align with business objectives.

Top Features of Notebooks in Microsoft Fabric

Lakehouse integration, built-in notebook resources, and collaboration features that set Microsoft Fabric notebooks apart from Jupyter and Databricks.

Big Data London 2025

AI agents dominated Big Data LDN 2025, but the real story wasn't the technology; it was which organisations could actually deploy it successfully. After five years tracking industry evolution through this event, one pattern emerged clearly: the winners had built their foundations first. For CTOs making platform decisions now, the strategic imperative isn't choosing between innovation and governance; it's recognising that governance enables innovation at scale.

Writing structured data to SharePoint from Synapse Notebooks

Write data back to SharePoint from Synapse Notebooks using PySpark, the Microsoft Graph API, and Service Principal auth — Drive IDs, tokens, and upload patterns.

Reading structured data from SharePoint in Synapse Notebooks

This post describes an approach to copy files and data from SharePoint into Azure using Synapse Notebooks.

Reading structured data from SharePoint in Synapse Pipelines

This post describes an approach to copy files and data from SharePoint into Azure using Synapse Pipelines.

Synapse & Service Principal SharePoint Integration

The interactive notebook shared in this post defines the process of granting Service Principals (inc. Synapse managed identities) access to SharePoint sites.

What is the Medallion Architecture?

The Medallion Architecture consists of three data tiers: Bronze (raw), Silver (clean), and Gold (projected). Data moves through these three tiers and becomes more opinionated at each stage.

Learning from disaster: a Titanic Power BI report walkthrough

In his final, posthumously published blog post, Paul Waller takes you through a creative walk-through of the Titanic Power BI Report he created with Barry Smart.

How to Build Mobile Navigation in Power BI

This is a follow-up guide to designing mobile navigation in Power BI, covering form, icons, states, actions, with a view to enhancing report design & UI.

How do Data Lakehouses Work? An Intro to Delta Lake

With new technologies, such as Delta Lake and other open table formats, there have been huge improvements in the performance of Data Lakehouses. But what is Delta Lake and how does it work?

What is a Data Lakehouse?

What exactly is a Data Lakehouse? This blog gives a general introduction to their history, functionality, and what they might mean for you!

Creating Quality Gates in the Medallion Architecture with Pandera

This blog explores how to implement robust validation strategies within the medallion architecture using Pandera, helping you catch issues early and maintain clean, trustworthy data.

Power BI: using label encoded vs one-hot encoded data

Understand why label encoding is the preferred technique for encoding categorical data for analysis in Power BI over one-hot encoding.

Encoding categorical data for Power BI: Label vs one-hot

One-hot encoding and label encoding are two methods used to encode categorical data. Understand the specific advantages and disadvantages of these techniques.

Power BI Images That Pop: Intuitive, easy-to-maintain reports

Explore integrating icons, pictograms and images into Power BI in the optimal way to enhance the user experience and minimise effort required to build and maintain reports.

Why Power BI developers should care about TMDL

Power BI's adoption of TMDL improves the readability of the semantic model, enables version control and enhances collaboration and efficiency for developers.

There's something wrong with the Pandas API on Spark

Fix the following issues: Errors converting large datasets to pandas, pandas for Spark is very slow, and pandas for Spark column reduction doesn't reduce data.

Carbon Optimised Data Pipelines: Next Steps

Extending carbon-optimised pipelines: choose between Azure regions at runtime, work around Wait activity limits, and adapt the pattern beyond the UK.

Carbon Optimised Data Pipelines: Pipeline Definition

A portable Data Factory, Synapse, or Fabric pipeline that calls the Carbon Intensity API and waits for the greenest scheduling window — no custom code.

Carbon Optimised Data Pipelines: Architecture Overview

Translating carbon-optimised scheduling into a modern data pipeline architecture for Microsoft Fabric, Azure Synapse and Azure Data Factory.

Carbon Optimised Data Pipelines: Introduction

Cloud data pipelines often have flexibility in when they run. Using the UK National Grid Carbon Intensity API, you can schedule them for the greenest window.

Why Power BI developers should care about PBIR

Power BI's new PBIR format enhances collaboration, version control, and efficiency for developers. Learn key benefits and future implications.

Why Power BI developers should care about Power BI projects (PBIP)

Power BI Projects are a game changer for teams building reports, offering a source-control friendly format, CI/CD support, and the ability to edit in a code editor.

Launchpad to Success: Building and Leading Your Data Team

High-performance data teams start with C-suite sponsorship, align to organisational goals, adopt a product mindset, and position themselves as innovation engines rather than order takers.

Data is a socio-technical endeavour

Our experience shows that the most successful data projects rely heavily on building a multi-disciplinary team.

Data & AI Engineering Maturity: fix issues before the buffers hit

As data and AI become the engine of business change, we need to learn the lessons of the past to avoid expensive failures.

SQLbits 2024 - The Best Bits

This is a summary of the sessions I attended at SQLbits 2024, Europe's largest expert-led data conference. This year SQLbits was hosted at Farnborough IECC, Hampshire.

How to Build Navigation into Power BI

Explore a step-by-step guide on designing a side nav in Power BI, covering form, icons, states, actions, with a view to enhancing report design & UI.

Accessing multi-select choice labels in Synapse Link for Dataverse

Learn how to access multi-select choice column choice labels from Azure Synapse Link for Dataverse using PySpark or SQL.

How to access choice labels: Azure Synapse Link for Dataverse with SQL

Learn how to access the choice labels from Azure Synapse Link for Dataverse using T-SQL through SQL Serverless and by using Spark SQL in a Synapse Notebook.

Styling and Enhancing Model Driven Apps in Power Apps

Discover a concise guide on improving Model Driven Power Apps styles with step-by-step instructions for a polished user experience.

How to access choice labels: Azure Synapse Link Dataverse with PySpark

PySpark recipe for accessing choice (option set) labels, not just integer values, from Dataverse data exposed via Azure Synapse Link in your Synapse lakehouse.

Notebooks in Azure Databricks

Azure Databricks Notebooks combine live code in Python, SQL, Scala, or R with visualisations and markdown. Here's how to set them up, attach clusters, manage libraries, and integrate them into ADF/Synapse pipelines.

Copilot: Unleashing AI in Self-Service Analytics

Explore AI-powered self-service reporting with tools like Copilot in Power BI and Microsoft Fabric, balancing benefits and pitfalls.

Microsoft Fabric: Announced

Microsoft Fabric unifies Power BI, Data Factory & Data Lake on Synapse infrastructure, reducing cost & time while enabling citizen data science.

What is OneLake?

Explore OneLake, Microsoft Fabric's core storage for data in Azure & other clouds. Discover its role in Fabric workloads, the OneDrive equivalent for data storage.

Intro to Microsoft Fabric

Microsoft Fabric unifies data & analytics, building on Azure Synapse Analytics for improved data-level interoperability. Explore its offerings & pros/cons.

Notebooks in Azure Synapse Analytics

Discover the use of Synapse Notebooks in Azure Synapse Analytics for data analysis, cleaning, visualization, and machine learning.

Version Control in Databricks

Explore how to implement source control in Databricks notebooks, promoting software engineering best practices.

Right questions to get data insights projects back on track

Learn about the thinking behind endjin's Power BI Maturity assessment by applying Wardley Doctrine, and asking more questions.

SQLbits 2023 - The Best Bits

This is a summary of the sessions I attended at SQLbits 2023 in Newport, Wales, which is Europe's largest expert-led data conference.

Working with JSON in Pyspark

Use PySpark's explode() to flatten deeply nested JSON into tabular DataFrames: preserving cluster parallelism while handling complex document structures.

Data validation in Python: a look into Pandera and Great Expectations

Implement Python data validation with Pandera & Great Expectations in this comparison of their features and use cases.

How to develop an accessible colour palette for Power BI

Explore how we developed an accessible color palette for Power BI reports, considering color vision deficiency and data visualization.

Big Data LDN: highlights and how to survive your first data conference

Highlights from Big Data LDN 2022, covering Data Mesh debates, data quality and creation vs extraction, modern data stack terminology, women in data talks, and tips for surviving your first in-person conference.

Customizing Lake Databases in Azure Synapse Analytics

Explore Custom Objects in Lake Databases for user-friendly column names, calculated columns, and pre-defined queries in Azure Synapse Analytics.

What is a Lake Database in Azure Synapse Analytics?

Explore Lake Databases in Azure Synapse Analytics: analyze Dataverse data, share Spark tables, and design models with Database Templates.

EVALUATEANDLOG in DAX

DAX has never had real debugging capability until EVALUATEANDLOG. This hidden function prints intermediate values from any DAX expression, letting you see exactly how your measures are evaluated.

Insight Discovery Part 6: Defining business requirements for data

Capture actionable insights through workshops, build a delivery backlog, and learn why cloud analytics success ultimately comes down to people, not technology.

Insight Discovery Part 5: Delivering insights via data pipelines

Traditional bottom-up data modelling leads to platforms that are hard to evolve and don't meet business needs. Data pipelines let you deliver thin, focused slices of value from source to insight.

Insight Discovery (part 4) – Data projects should have a backlog

This series focuses on maximizing data projects' impact via an iterative, insight discovery process, and synergy with cloud platforms like Azure Synapse.

Insight Discovery (part 3) – Defining Actionable Insights

Define actionable insights by starting with a specific action, then identifying the questions, evidence and feedback loops that turn data into business value.

Design assets for impactful data storytelling in Power BI

In this post we will talk through how to expand on a data team's creative skillset, without access to specialist photo editing software such as Photoshop or Illustrator.

5 tips to pass the PL-300 exam: Microsoft Power BI Data Analyst

I recently passed the PL-300 - Power BI Data Analyst exam. Here are some tips to prepare for it that I found useful!

Insight Discovery Part 2: data projects start by forgetting the data

Successful data projects start by forgetting about the data. Begin with business goals and the decisions that drive them, and let data follow the insight.

Performance Optimisation Tools for Power BI

Optimise Power BI report performance with analyzer tools. Discover essential techniques for efficient report development in this blog post.

Insight Discovery (part 1) – why do data projects often fail?

Why traditional bottom-up data warehouse projects so often deliver compromised platforms — and how a top-down, action-oriented approach changes the outcome.

Automating Excel in the Cloud with Office Scripts and Power Automate

Automate Excel tasks with Office Scripts & Power Automate. Get an overview and explore a practical example in this post.

Applying Behaviour Driven Development to data and analytics projects

In this blog we demonstrate how the Gherkin specification can be adapted to enable BDD to be applied to data engineering use cases.

Sharing access to Shared Metadata Model objects in Synapse

Learn how to grant non-admin users access to Spark synchronized objects with SQL Serverless in Synapse Analytics using the Shared Metadata Model.

What is the Shared Metadata Model in Azure Synapse, and why use it?

Explore Azure Synapse's 'Shared Metadata Model' feature. Learn how it syncs Spark tables with SQL Serverless, its benefits, and tradeoffs.

Context Transition in DAX

How CALCULATE silently turns a row context into a filter context in DAX — and why context transition prevents surprises in calculated columns and measures.

Excel, data loss, IEEE754, and precision

Explore the impact of Excel's numeric precision rules on identifiers and learn about infamous data loss incidents caused by misuse in this post.

CALCULATE in DAX

CALCULATE is the only DAX function that can change filter context in Power BI. Jess explains how it works, its syntax, and how filter propagation flows.

RELATED and RELATEDTABLE in DAX

How DAX's RELATED and RELATEDTABLE functions navigate one-to-many relationships in Power BI — with a Customer/Sales example showing row-context behaviour.

Extract insights from tag lists using Python Pandas and Power BI

Discover how to extract insights from spreadsheets and CSV files using Pandas and Power BI in this blog post.

Filtering unrelated tables in Power BI

Explore filtering fact tables with Star Schemas and learn a clean method for filtering dimension tables by another dimension.

Dynamically switch Power BI measures with Field Parameters

Power BI's Field Parameters feature lets users toggle between measures in a single visual with no advanced modelling. Here's how to set it up, plus a DAX-based workaround for when you need more control.

Table Functions in DAX: DISTINCT

Explore DAX's DISTINCT table function in Power BI — how it returns unique values of a column in the current filter context, with worked Sales and Product examples.

Table Functions in DAX: FILTER and ALL

FILTER and ALL are the two most common table functions in DAX, controlling how filter context flows through Power BI to drive dynamic measures and calculations.

Measures vs Calculated Columns in DAX and Power BI

Explore DAX & Power BI differences between measures & calculated columns, and learn when to use each in this informative blog post.

SQLbits 2022 - The Best Bits

This is a summary of the sessions I attended at SQLbits 2022 in London, which is Europe's largest expert led data conference.

How to Create Custom Buttons in Power BI

Explore a step-by-step guide on designing custom buttons in Power BI, covering shape, form, icons, states, actions, and enhancing report design & UI.

A visual approach to demand management and prioritisation

Explore a simple, visual approach to prioritisation that aids decision-making and stakeholder engagement.

Evaluation Contexts in DAX - Context Transition

In this third and final part of this series, we learn how CALCULATE performs Context Transition when used inside of a filter context.

Evaluation Contexts in DAX - Relationships

After learning about the two different types of evaluation contexts in our previous post, we now talk about table relationships and how these interact with the filter and row contexts to condition the output of our DAX code.

Testing Power BI Reports with the ExecuteQueries REST API

Explore DAX queries for scenario-based testing in Power BI reports to ensure data model validity, rule adherence, and security maintenance.

How to Build a Branded Power BI Report Theme

Explore translating a company's brand into Power BI reports and extending their visual identity to include the Power BI platform.

Evaluation Contexts in DAX - Filter and Row Contexts

Explore DAX query language in Power BI: learn about Evaluation Contexts and their impact on code for improved formula results.

Why you should care about the Power BI ExecuteQueries API

Explore the benefits of Power BI's new ExecuteQueries REST API for developers in tooling, process, and integrations.

Data is the new soil

Thinking of data as the new soil is useful in highlighting the key elements that enable a successful data and analytics initiative.

Azure Synapse SQL Serverless: connect Data Lake and Power BI

TL;DR - Using Azure Synapse SQL Serverless, you can query Azure Data Lake and populate Power BI reports across multiple workspaces.

How to test Azure Synapse notebooks

Explore data with Azure Synapse's interactive Spark notebooks, integrated with Pipelines & monitoring tools. Learn how to add tests for business rule validation.

Do robots dream of counting sheep?

Some of my thoughts inspired whilst helping out on the farm over the weekend. What is the future of work given the increasing presence of machines in our day to day lives? In which situations can AI deliver greatest value? How can we ease the stress of digital transformation on people who are impacted by it?

Safely reference nullable activity output in Synapse / ADF

Discover Azure Data Factory's null-safe operator for referencing activity outputs that may not always exist. Learn to use it effectively.

How Azure Synapse unifies your development experience

Modern analytics requires a multi-faceted approach, which can cause integration headaches. Azure Synapse's Swiss army knife approach can remove a lot of friction.

How do I know if my data solutions are accurate?

Data insights are useless, and even dangerous (as we've seen recently at Public Health England) if they can't be trusted. So, the need to validate business rules and security boundaries within a data solution is critical. This post argues that if you're doing anything serious with data, then you should be taking this seriously.

Fix "You need permission to access workspace" in Azure Synapse

Fix the "You need permission" error in Azure Synapse Analytics with this guide, addressing its causes and solutions for Data Engineers/Developers.

How to use the Azure CLI to manage access to Synapse Studio

Assign roles in Synapse Studio for Azure Synapse Analytics devs using Azure CLI. Accessible by Owners/Contributors of the resource.

Public Health England Test & Trace Excel error: a one-step fix

Despite the subsequent media reporting, the loss of 16,000 Covid-19 test results at Public Health England wasn't caused by Excel. This post argues that a lack of an appropriate risk and mitigation analysis left the process exposed to human error, which ultimately led to the loss of data and inaccurate reporting. It describes a simple process that could have been applied to prevent the error, and how it will help if you're worried about ensuring quality or reducing risk in your own business, technology or data programmes.

Does Azure Synapse Link redefine the meaning of full stack serverless?

Explore Azure Synapse Link for Cosmos DB's impact on 'full stack serverless', No-ETL, and pay-as-you-query analytics.

SQL Notebooks for Azure Synapse SQL Pools & SQL on demand

Wishing Azure Synapse Analytics had support for SQL notebooks? Fear not, it's easy to take advantage of rich interactive notebooks for SQL Pools and SQL on Demand.

Deploy an Azure Synapse Analytics workspace using an ARM Template

Explore deploying Azure Synapse Analytics workspaces using ARM templates, a popular infrastructure deployment method for organizations.

Azure Synapse Analytics: serverless replacing the data warehouse

Serverless data architectures enable leaner data insights and operations. How do you reap the rewards while avoiding the potential pitfalls?

Quick tip – Removing totals from a matrix in Power BI

A quick Power BI tip: remove unwanted column summarisation from a matrix by replacing the column with a SELECTEDVALUE measure — with a worked example.

Quick tip: updating Power BI column sort order without circular refs

Power BI columns sort alphabetically by default — putting 'February' before 'January'. Fix it with a lookup table and a calculated column for custom sort order.

Picking contrasting font colours in Power BI tables

Boost Power BI report readability with dynamic font colors for diverse backgrounds, ensuring clear text display and enhanced accessibility.

Benchmarking Azure Synapse SQL Serverless with Polyglot Notebooks

New Azure Synapse Analytics service offers SQL Serverless for on-demand data lake queries. We tested its potential as a Data Lake Analytics replacement.

Azure Synapse for C# Developers: 5 things you need to know

Did you know that Azure Synapse has great support for .NET and #csharp? Learning new languages is often a barrier to digital transformation; being able to use existing people, skills, tools and engineering disciplines can be a massive advantage.

5 Reasons why Azure Synapse Analytics should be on your roadmap

Explore 5 key reasons to choose Azure Synapse Analytics for your cloud data needs, based on years of experience in driving customer outcomes.

Power BI Embedded: Convention-based dynamic Row-level Security

Explore Power BI Embedded for ISVs, using JavaScript library for personalization, Row-level Security, and modifying Embed Requests for data filtering.

How can I improve my data model in Power BI?

Explore how to configure model properties in Power BI for enhanced discoverability and improved data visualisation support.

Why Power BI developers should care about the read/write XMLA endpoint

Whilst "read/write XMLA endpoint" might seem like a technical mouthful, its addition to Power BI is a significant milestone in the strategy of bringing Power BI and Analysis Services closer together. As well as closing the gap between IT-managed workloads and self-service BI, it presents a number of new opportunities for Power BI developers in terms of tooling, process and integrations. This post highlights some of the key advantages of this new capability and what they mean for the Power BI developer.

Learning DAX and Power BI – CALCULATE

This is the final blog in a series about DAX and Power BI. This post focuses on the CALCULATE function, which is a unique function in DAX. The CALCULATE function has the ability to alter filter contexts, and therefore can be used to enable extremely powerful and complex processing. This post covers some of the most common scenarios for using CALCULATE, and some of the gotchas in the way in which these different features interact!

Testing Power BI Reports using SpecFlow and .NET

Ensure Power BI report quality by connecting to tabular models, executing scenario-based specs, and validating data, business rules, and security.

Data modelling with Power BI - Loading and shaping data

Explore data modelling in Power BI, including loading, shaping, and enhancing data. Learn key steps and practical examples.

Learning DAX and Power BI – Related Tables and Relationships

This is the sixth blog in a series about DAX and Power BI. This post focuses on relationships and related tables. These relationships allow us to build up intricate and powerful models using a combination of sources and tables. The use of relationships in DAX powers many of the features around slicing and page filtering of reports.

Azure Oxford talk: combatting illegal fishing for under £10/month

Jess and Carmel recently gave a talk at Azure Oxford on Combatting illegal fishing with Machine Learning and Azure - for less than £10 / month. The recording of that talk is now available for viewing! The talk focuses on the recent work we completed with OceanMind. They run through how to construct a cloud-first architecture based on serverless and data analytics technologies and explore the important principles and challenges in designing this kind of solution. Finally, we see how the architecture we designed through this process not only provides all the benefits of the cloud (reliability, scalability, security), but because of the pay-as-you-go compute model, has a compute cost that we could barely believe!

Testing Power BI Dataflows using SpecFlow and the Common Data Model

Ensure reliable insights with endjin's automated quality gates for validating Power BI Dataflows in complex solutions.

Learning DAX and Power BI – Table Functions

This is the fifth blog in a series on DAX and Power BI. This post focuses on table functions. In DAX, table functions return a table which can then be used for future processing. This can be useful if, for example, you want to perform an operation over a filtered dataset. Table functions, like most functions in DAX, operate under the filter context in which they are applied.

Azure Analysis Services - how to save money with automatic shutdown

Explore Azure Analysis Services for scalable analytics. Control costs via automation with Powershell & Azure DevOps in multi-environment setups.

Learning DAX and Power BI - Aggregators

This is the fourth blog in a series about DAX and Power BI. We have so far covered filter and row contexts, and the difference between calculated columns and measures. This post focuses on aggregators. We cover the limitations of the classic aggregators, and demonstrate the power of the iterative versions. We also highlight some of the less intuitive features around how these functions interact with both filter and row contexts.

Power BI Dataflow refresh polling

There's no obvious API endpoint for Power BI Dataflow refresh history, unlike Datasets. Here's how to get it programmatically.

Azure Analysis Services: update calculated column expression from .NET

Learn how to update Azure Analysis Services model schemas in .NET apps using AMO SDK for user-driven analysis.

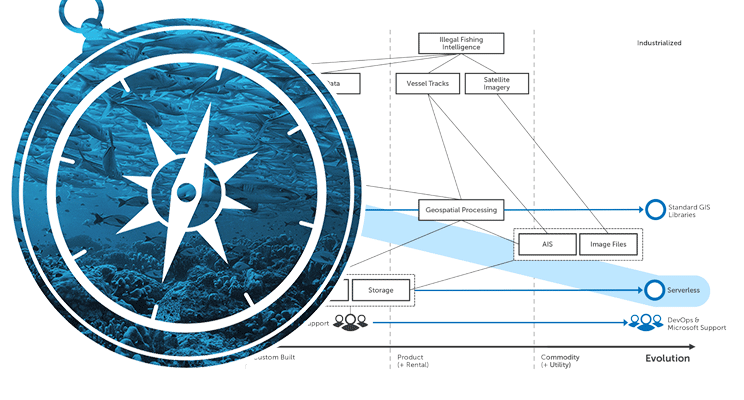

Wardley Maps: How OceanMind use Azure & AI to fight illegal fishing

Wardley Maps are a fantastic tool to help provide situational awareness, in order to help you make better decisions. We use Wardley Maps to help our customers think about the various benefits and trade-offs that can be made when migrating to the Cloud. In this blog post, Jess Panni demonstrates how we used Wardley Maps to plan the migration of OceanMind to Microsoft Azure, and how the maps highlighted where the core value of their platform was, and how PaaS and Serverless services offered the most value for money for the organisation.

Learning DAX and Power BI – Calculated Columns and Measures

Dive into DAX and Power BI in our blog. Learn about calculated columns, measures, and their use in complex visuals.

Optimising C# for a serverless environment

Tour our OceanMind project using Azure Functions for real-time marine telemetry processing. Learn optimization techniques for serverless environments.

Learning DAX and Power BI – Row Contexts

Here is the second blog in a series around learning DAX and Power BI. This post focuses on row contexts, which are used when iterating over the rows of a table when, for example, evaluating a calculated column. Row contexts along with filter contexts underpin the basis of the DAX language. Once you understand this underlying theory it is purely a case of learning the syntax for the different operations which are built on top of it.

Azure Analysis Services: Process an async model refresh from .NET

Learn to use Azure Analysis Services in custom apps via REST API in .NET for efficient async model refreshes.

Learning DAX and Power BI - Filter Contexts

Every DAX formula runs inside a filter context - the set of rows it can see. Row selection, column selection, slicers, and report filters all shape that context and change your results.

Azure Analysis Services: How to execute a DAX query from .NET

Explore endless possibilities with dynamic DAX queries in C# for Azure Analysis Services integration in custom apps using the provided code samples.

British Science Week: inspiring the next generation of data scientists

The theme of this year's British Science Week (6 - 15 March 2020) is "Our Diverse Planet". We'll be getting involved by speaking to school children about the work we've been doing with Oxfordshire-based OceanMind (part of the Microsoft AI for Good programme) to help them combat illegal fishing, hopefully inspiring some of the next generation of data scientists!

Azure Analysis Services: query all measures in a model from .NET

Explore .NET querying methods for integrating Azure Analysis Services beyond data querying into dynamic UIs and APIs.

Azure Analysis Services: How to open a connection from .NET

Learn to integrate Azure Analysis Services in apps by establishing server connections. Follow this guide with code samples for essential scenarios.

NDC London 2020 - My highlights

Ed attended NDC London 2020, along with many of his endjin colleagues. In this post he summarises and reflects upon his favourite sessions of the conference, including: "OWASP Top Ten proactive controls" by Jim Manico, "There's an Impostor in this room!" by Angharad Edwards, "How to code music?" by Laura Silvanavičiūtė, "ML and the IoT: Living on the Edge" by Brandon Satrom, "Common API Security Pitfalls" by Philippe De Ryck, and "Combatting illegal fishing with Machine Learning and Azure - for less than £10 / month" by Jess Panni & Carmel Eve.

Azure Analysis Services integration: .NET, REST APIs and PowerShell

Explore Azure Analysis Services in custom apps using SDKs, PowerShell cmdlets & REST APIs. Learn to choose the right framework in this guide.

Azure Analysis Services: 8 reasons to integrate in custom apps

Azure Analysis Services isn't just for Power BI dashboards. Here are eight reasons you might want to integrate it directly into a custom application.

Building a secure data solution using Azure Data Lake Store (Gen2)

In this blog we discuss building a secure data solution using Azure Data Lake. Data Lake has many features which enable fine-grained security and data separation. It is also built on Azure Storage, which enables us to take advantage of all of those features and means that ADLS is still a cost-effective storage option! This post runs through some of the great features of ADLS and an example of how we build our solutions using this technology!

NDC London talk: combatting illegal fishing with ML and Azure

In January 2020, Carmel is speaking about creating high performance geospatial algorithms in C# which can detect suspicious vessel activity, which is used to help alert law enforcement to illegal fishing. The input data is fed from Azure Data Lake Storage Gen 2, and converted into data projections optimised for high-performance computation. This code is then hosted in Azure Functions for cheap, consumption based processing.

Import and export notebooks in Databricks

Learn to import/export notebooks in Databricks workspaces manually or programmatically, and transfer content between workspaces efficiently.

Demystifying machine learning using neural networks

Machine learning often seems like a black box. This post walks through what's actually happening under the covers, in an attempt to de-mystify the process! Neural networks are built up of neurons. In a shallow neural network we have an input layer, a "hidden" layer of neurons, and an output layer. For deep learning, there are simply more hidden layers, which allows for combining neurons' inputs and outputs to build up a more detailed picture. If you have an interest in Machine Learning and what is really happening, definitely give this a read (WARNING: Some algebra ahead...)!

Create a Power BI workspace in Azure DevOps Pipeline with PowerShell

Automate Power BI workspace creation & security using PowerShell & Azure DevOps pipelines in a wider DevOps strategy with Azure data platform services.

Using Databricks Notebooks to run an ETL process

Explore data analysis & ETL with Databricks Notebooks on Azure. Utilize Spark's unified analytics engine for big data & ML, and integrate with ADF pipelines.

Endjin is a Snowflake Partner

Snowflake is a cloud-native data warehouse platform that enables data engineering, data science, data lakes, data sharing and data warehousing. Endjin are very excited to announce our partnership.

Exploring Azure Data Factory - Mapping Data Flows

Mapping Data Flows are a relatively new feature of ADF. They allow you to visually build up complex data transformation sequences. This can aid in the streamlining of data manipulation and ETL processes, without the need to write any code! This post gives a brief introduction to the technology, and what this could enable!

Snowflake Connector for Azure Data Factory - Part 1

Explore the lack of a native Azure Data Factory connector for Snowflake and discover alternatives for integration between these popular platforms.

Announcing Power BI Weekly!

Power BI has become more successful than anyone anticipated. We've decided to spin out the Power BI category from the Azure Weekly Newsletter into its own publication - Power BI Weekly.

In conversation: .NET, Cloud, Data, AI and endjin

When he joined endjin, Technical Fellow Ian sat down with founder Howard for a Q&A session. This was originally published on LinkedIn in 5 parts, but is republished here, in full. Ian talks about his path into computing, some highlights of his career, the evolution of the .NET ecosystem, AI, and the software engineering life.

Thoughts on .NET, Cloud, AI, ML and teaching software engineers

Ian Griffiths recently joined endjin as a Technical Fellow. We had a long fireside chat, which has been broken down into a 5 part series covering .NET, the Cloud, AI, ML, teaching software engineering, and why he joined endjin.

Branches, builds and modern data pipelines. Let's catch-up!

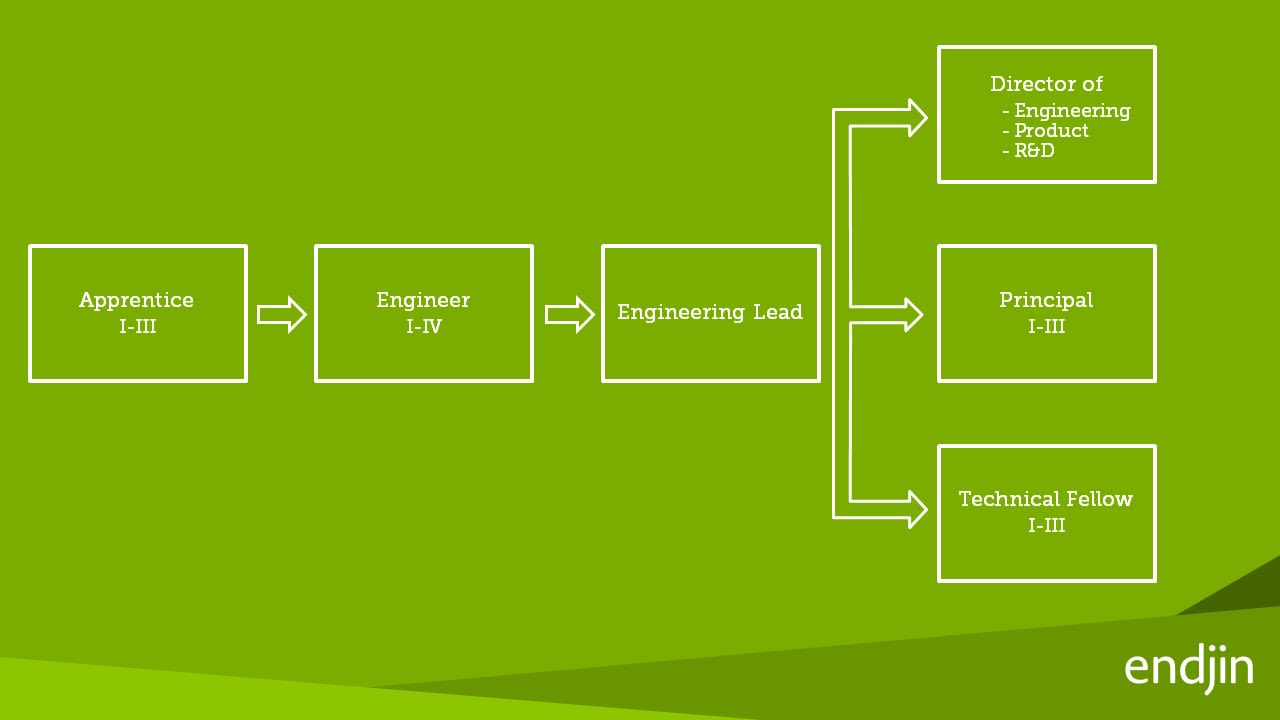

As an apprentice engineer at endjin, you cover a lot of ground, especially at a consultancy which works with the latest and greatest tools to solve its clients' problems. Learn about endjin's Modern Data Platform, which is a culmination of IP, processes and knowledge built from years of implementing high-performance data-driven solutions. Also learn about the types of tools an apprentice gets to use, and the types of things an apprentice learns along the way.

CALENDAR vs CALENDARAUTO for year-on-year date tables

Generate Power BI date tables with the CALENDAR & CALENDARAUTO functions. Learn key considerations for time-intelligence calculations in reports.

Using Python inside SQL Server

Learn to use SQL Server's Python integration for efficient data handling. Eliminate clunky transfers and easily operationalize Python models/scripts.

Snap Back to Reality – Month 2 & 3 of my Apprenticeship

Learn what types of things an apprentice gets up to at endjin a few months after joining. You could be learning about Neural Networks: algorithms which mimic the way biological systems process information. You could be attending Microsoft's Future Decoded conference, learning about Bots, CosmosDB, IoT and much more. Hopefully, you wouldn't be in hospital after a ruptured appendix!

How we set up daily Azure spending alerts and saved $10k

We set up daily Azure spending alerts with Azure Cost Management, catching cost spikes within 24 hours and saving over $10,000 versus monthly billing.

My first month as an apprentice at endjin

Structured apprenticeships provide a great way to build skills whilst getting real-life experience. Endjin's apprenticeship scheme has been refined over years, with an optimal mixture of training, project work, and exposure to commercial processes - a scheme which is designed to build strong foundations for a well-rounded Software Engineering consultant. This post explains the transition from university to an apprenticeship at endjin, including the types of work an apprentice could end up doing, and some examples of real-life learnings from a real-life apprentice.

Power BI DirectQuery report with multiple SQL DBs via Elastic Query

Learn to build a Power BI dashboard using DirectQuery and ElasticQuery across multiple databases with Alice Waddicor.

Welcome to an internship at endjin!

A career in software engineering doesn't need to start with a Computer Science degree. The underlying traits of problem solving, a willingness to learn and the ability to collaborate well can be built in any field. Internships provide a great way to get your foot-in-the-door in the professional world, and arm you with some real-life experience for future endeavours. This post describes an internship at endjin, including the type of work you could be asked to do and what you could learn.

AWS vs Azure vs Google Cloud Platform - Mobile Services

This post compares mobile services from AWS, Azure, and Google Cloud Platform, covering mobile backends, push notifications, analytics, authentication, and device testing capabilities across all three providers.

AWS vs Azure vs Google Cloud Platform - Internet of Things

This post compares the Internet of Things capabilities of AWS, Azure, and Google Cloud Platform, examining device communication, data ingestion, and real-time analytics features.

AWS vs Azure vs Google Cloud Platform - Analytics & Big Data

This post compares analytics and big data services across AWS, Azure, and Google Cloud Platform, covering data processing, machine learning, streaming analytics, and visualization offerings.

AWS vs Azure vs Google Cloud Platform - Database

This post compares database services across AWS, Azure, and Google Cloud Platform, including managed relational databases, NoSQL options, data warehouses, and caching solutions for cloud migrations.

Querying the Azure DevOps Work Items API directly from Power BI

Discover Azure DevOps Work Items features, use the RESTful API for insights, and visualize the results in Power BI in our step-by-step guide.

Automating R Unit Tests With Azure DevOps

Many organisations are starting to adopt the R Programming Language for their data science and financial modelling scenarios. But just because the language is being used for modelling doesn't mean you shouldn't write unit tests that can be exercised as part of your CI/CD pipeline. In this blog post Jess Panni demonstrates how you can run R unit tests inside Azure DevOps.

TeamCity MetaRunner: Release Annotations in Azure Application Insights

Meta-Runners allow you to easily create reusable build components for TeamCity. In this post I demonstrate how to create a Meta-Runner to create Azure Application Insights Release Annotations.

Automated R Deployments in Azure

This post explores how to automate the deployment of R models to Azure Machine Learning using reusable scripts that integrate with Azure DevOps and standard ALM processes.

Machine Learning - the process is the science

What do machine learning and data science actually mean? This post digs into the detail behind the endjin approach to structured experimentation, arguing that the "science" is really all about following the process, allowing you to iterate to insights quickly when there are no guarantees of success.

Embracing Disruption - Financial Services and the Microsoft Cloud

We have produced an insightful booklet called "Embracing Disruption - Financial Services and the Microsoft Cloud" which examines the challenges and opportunities for the Financial Services Industry in the UK, through the lens of Microsoft Azure, Security, Privacy & Data Sovereignty, Data Ingestion, Transformation & Enrichment, Big Compute, Big Data, Insights & Visualisation, Infrastructure, Ops & Support, and the API Economy.

Machine Learning - mad science or a pragmatic process?

This post looks at what machine learning really is (and isn't), dispelling some of the myths and hype that have emerged as the interest in data science, predictive analytics and machine learning has grown. Without any hard guarantees of success, it argues that machine learning as a discipline is simply trial and error at scale – proving or disproving statistical scenarios through structured experimentation.

Developing U-SQL: Local Data Folder

This post explains how to find the local data folder location for running U-SQL scripts locally in Visual Studio, which changed between versions of the Data Lake Tools.