SQL Notebooks for Azure Synapse SQL Pools & SQL on demand

As much as consumption based cloud analytics has already lowered the barrier to entry for organisations to take advantage of big data, the addition of notebook-based development significantly delivers decreased time to (business) value, and eliminates waste by improving collaboration and productivity.

The notion of a cross-functional team has been a core ambition of Agile development, and there have been a number of occasions in my career where I've paired with domain experts to translate real world knowlege into a system. Sprint visions, user stories, personas, value-stream maps, and gherkin based executable specifications have all been steps along the way to help synchronise and consolidate the aims and knowledge of the busienss and delivery team.

These have, in my opinion, all been baby steps towards the value that notebooks can deliver, especially in data exploration and experimentation.

Notebooks FTW!

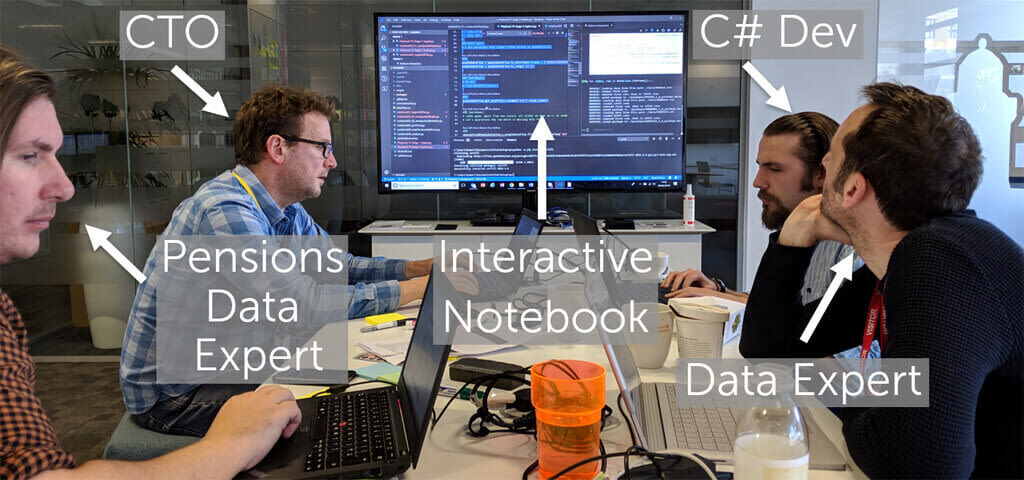

Take the following photo; this was from a data hack we ran for one of our customers last year. We took a 25 year old business process (onboarding pension data) that took on average 12 weeks to complete.

We had an entirely cross functional team that included the CTO, a pension data expert (who ran the onboarding process), a C# developer, a data expert, and me (I'll leave you to guess what value I brought to the team!).

Through the notebook experience, we could pull together all the relevant documentation, diagrams, business rules, regulatory requirements, aims and hypothesis from a business perspective, and start to experiment via code. Because it's highly contextual everyone (regardless of technical ability) could understand what we were trying to achieve and how a snippet of code related to the step in the process. Everyone was bought in, fully engaged, and the collaboration was both highly informative and extremely productive.

The end result was that we re-engineered and optimized the existing process and reduced it from 12 weeks to just under 45 minutes.

Results like this are why I've been such a huge advocate for notebooks across the Microsoft ecosystem; from Polyglot Notebooks, to notebooks in Cosmos DB, Azure Notebooks (which, unfortunately shuts down on 09/10/2020), Azure Machine Learning, Azure Databricks and even Kusto (KQL) notebooks.

Azure Data Studio Notebooks and SQL on demand

So, it's great that Azure Synapse Studio has built-in notebooks, but as it stands these are geared towards Spark workloads. If you are using SQL Pools or serverless SQL on demand then you are out of luck.

Technically, you could use still use the built-in notebooks as Python, Scala and .NET all support SQL connections and querying, but you'd need to be running a Spark cluster to execute the queries, and wrap your SQL in another programming language, which kind of defeats the purpose. I'm sure pure SQL notebooks are something that's on Microsoft's radar but for now we need to find an alternative solution.

Fortunately, Azure Data Studio comes with support for SQL notebooks which you can easily connect to both Synapse SQL Pools and SQL on demand. What's more, the notebooks are standard Jupyter ipynb files which means they can be easily version controlled.

Checkout this video walk-through showing how to use Azure Data Studio notebooks for data exploration using serverless Azure Synapse SQL on Demand.

Want to get started with Synapse but not sure where to start?

If you'd like to know more about Azure Synapse, we offer a free 1 hour, 1-2-1 Azure Data Strategy Briefing. Please book a call and then we'll confirm the time and send you a meeting invite.

We also have created number of talks about Azure Synapse:

- Serverless data prep using SQL on demand and Synapse Pipelines

- Azure Synapse - On-Demand Serverless Compute and Querying

- Detecting Anomalies in IoT Telemetry with Azure Synapse Analytics

- Custom C# Spark Jobs in Azure Synapse

- Custom Scala Spark Jobs in Azure Synapse

Finally, if you are interested in more content about Azure Synapse, we have a dedicated editions page which collates all our blog posts.