Browse our archives by topic…

Big Compute

What is a Data Lakehouse?

What exactly is a Data Lakehouse? This blog gives a general introduction to Data Lakehouses: their history, their functionality, and what they might mean for you!

Azure Synapse Analytics: serverless replacing the data warehouse

Serverless data architectures enable leaner data insights and operations. How do you reap the rewards while avoiding the potential pitfalls?

Benchmarking Azure Synapse SQL Serverless with Polyglot Notebooks

New Azure Synapse Analytics service offers SQL Serverless for on-demand data lake queries. We tested its potential as a Data Lake Analytics replacement.

Does Azure Synapse Analytics spell the end for Azure Databricks?

Explore why Microsoft's new Spark offering in Azure Synapse Analytics is a game-changer for Azure Databricks investors.

Azure Oxford talk: combatting illegal fishing for under £10/month

Jess and Carmel recently gave a talk at Azure Oxford on combatting illegal fishing with Machine Learning and Azure for less than £10 a month. The recording of that talk is now available for viewing! The talk focuses on the recent work we completed with OceanMind. They run through how to construct a cloud-first architecture based on serverless and data analytics technologies, and explore the important principles and challenges in designing this kind of solution. Finally, we see how the architecture we designed through this process not only provides all the benefits of the cloud (reliability, scalability, security) but also, because of the pay-as-you-go compute model, has a compute cost that we could barely believe!

Building a proximity detection pipeline

Endjin's blog post details their project with OceanMind, using a serverless architecture and machine learning to detect illegal fishing.

Optimising C# for a serverless environment

Tour our OceanMind project using Azure Functions for real-time marine telemetry processing. Learn optimization techniques for serverless environments.

Building a secure data solution using Azure Data Lake Store (Gen2)

In this blog we discuss building a secure data solution using Azure Data Lake. Data Lake has many features which enable fine-grained security and data separation. It is also built on Azure Storage, which lets us take advantage of all of those features and means that ADLS is still a cost-effective storage option! This post runs through some of the great features of ADLS and works through an example of how we build our solutions using this technology!

NDC London talk: combatting illegal fishing with ML and Azure

In January 2020, Carmel is speaking about creating high-performance geospatial algorithms in C# that detect suspicious vessel activity and help alert law enforcement to illegal fishing. The input data is fed from Azure Data Lake Storage Gen2 and converted into data projections optimised for high-performance computation. This code is then hosted in Azure Functions for cheap, consumption-based processing.

C#, Span and async

Explore how ref struct types like Span<T> in C# enhance performance but pose async method challenges, and learn mitigation techniques.

Increasing performance via low memory allocation in C#

Explore techniques for high-performance, low-allocation code in Azure Functions using C#, including data streaming, list preallocation, and Span<T>.

Running Azure Functions in Docker on a Raspberry Pi 4

Run an Azure Function in a Docker container on a Raspberry Pi 4 with this step-by-step guide and ready-to-use code for your projects.

Import and export notebooks in Databricks

Learn to import/export notebooks in Databricks workspaces manually or programmatically, and transfer content between workspaces efficiently.

Demystifying machine learning using neural networks

Machine learning often seems like a black box. This post walks through what's actually happening under the covers, in an attempt to demystify the process! Neural networks are built up of neurons. In a shallow neural network we have an input layer, a "hidden" layer of neurons, and an output layer. For deep learning, there are simply more hidden layers, which allow neurons' inputs and outputs to be combined to build up a more detailed picture. If you have an interest in Machine Learning and what is really happening, definitely give this a read (WARNING: Some algebra ahead...)!
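
As a rough illustration of the layer structure described above (and not the code from the post itself), the following minimal sketch passes two inputs through one hidden layer of three neurons to a single output. The weights, biases, sigmoid activation, and function names are all assumptions chosen for the example.

```python
import math


def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def layer_forward(inputs, weights, biases):
    """Compute one layer: each neuron takes a weighted sum of the
    inputs, adds its bias, and applies the sigmoid activation."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


def shallow_network(inputs, hidden_w, hidden_b, output_w, output_b):
    """Input layer -> one hidden layer -> output layer."""
    hidden = layer_forward(inputs, hidden_w, hidden_b)
    return layer_forward(hidden, output_w, output_b)


# Illustrative (made-up) parameters: 2 inputs, 3 hidden neurons, 1 output.
hidden_w = [[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]]
hidden_b = [0.0, 0.1, -0.1]
output_w = [[0.7, -0.5, 0.2]]
output_b = [0.05]

prediction = shallow_network([1.0, 2.0], hidden_w, hidden_b, output_w, output_b)
print(prediction)
```

A deeper network would simply chain more `layer_forward` calls between the input and the output; training (adjusting the weights) is not shown here.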

Using Databricks Notebooks to run an ETL process

Explore data analysis & ETL with Databricks Notebooks on Azure. Utilize Spark's unified analytics engine for big data & ML, and integrate with ADF pipelines.

Exploring Azure Data Factory - Mapping Data Flows

Mapping Data Flows are a relatively new feature of ADF. They allow you to visually build up complex data transformation sequences, which can help streamline data manipulation and ETL processes without the need to write any code! This post gives a brief introduction to the technology and what it could enable!

Avoid deployment lock errors: run web & function apps from packages

Fix DLL locking errors in Azure Functions deployments by using the "deploy from package" option. This runs the function app from a package file, resolving the issue.

In conversation: .NET, Cloud, Data, AI and endjin

When he joined endjin, Technical Fellow Ian sat down with founder Howard for a Q&A session. This was originally published on LinkedIn in 5 parts, but is republished here, in full. Ian talks about his path into computing, some highlights of his career, the evolution of the .NET ecosystem, AI, and the software engineering life.

Using Python inside SQL Server

Learn to use SQL Server's Python integration for efficient data handling. Eliminate clunky transfers and easily operationalize Python models/scripts.

Snap Back to Reality – Month 2 & 3 of my Apprenticeship

Learn what types of things an apprentice gets up to at endjin a few months after joining. You could be learning about Neural Networks: algorithms which mimic the way biological systems process information. You could be attending Microsoft's Future Decoded conference, learning about Bots, CosmosDB, IoT and much more. Hopefully, you wouldn't be in hospital after a ruptured appendix!

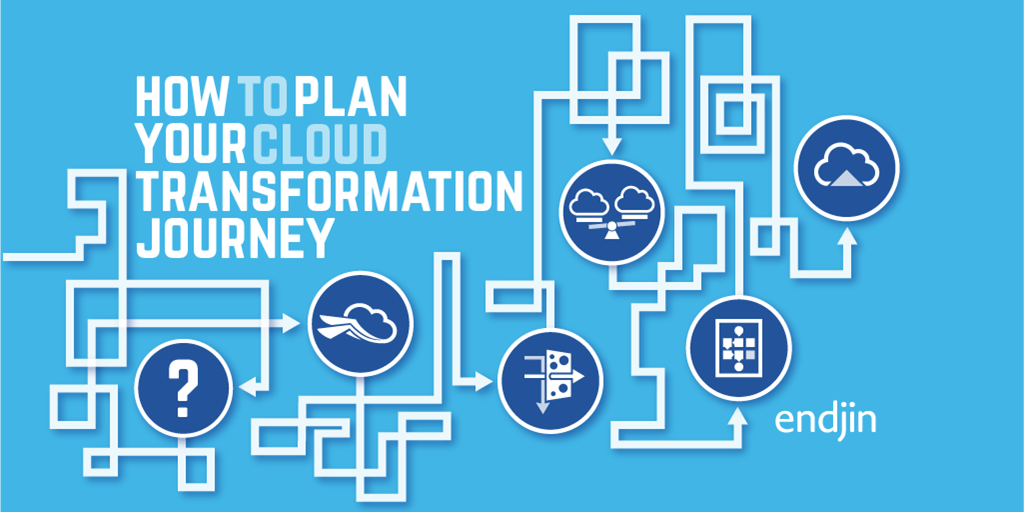

How to plan your cloud transformation journey

We've been helping customers adopt Microsoft Azure since 2010, and have produced a lot of thought leadership to help people think about the steps required, the risks involved, and how to plan a successful adoption.

AWS vs Azure vs Google Cloud Platform - Mobile Services

This post compares mobile services from AWS, Azure, and Google Cloud Platform, covering mobile backends, push notifications, analytics, authentication, and device testing capabilities across all three providers.

AWS vs Azure vs Google Cloud Platform - Database

This post compares database services across AWS, Azure, and Google Cloud Platform, including managed relational databases, NoSQL options, data warehouses, and caching solutions for cloud migrations.

AWS vs Azure vs Google Cloud Platform - Compute

This post compares compute services across AWS, Azure, and Google Cloud Platform, covering virtual machines, containers, PaaS offerings, and serverless options for different cloud migration strategies.

Embracing Disruption - Financial Services and the Microsoft Cloud

We have produced an insightful booklet called "Embracing Disruption - Financial Services and the Microsoft Cloud" which examines the challenges and opportunities for the Financial Services Industry in the UK, through the lens of Microsoft Azure, Security, Privacy & Data Sovereignty, Data Ingestion, Transformation & Enrichment, Big Compute, Big Data, Insights & Visualisation, Infrastructure, Ops & Support, and the API Economy.

Azure Batch - Time is Money in Big Compute

Consumption-based pricing is one of the USPs of Cloud PaaS services, but the default settings aren't necessarily optimised for cost. Significant savings can be made by understanding your workload.

Azure ML: experimenting with training data proportions with SMOTE

Azure Machine Learning's SMOTE module helps balance imbalanced training data by generating synthetic samples, improving model accuracy for multi-class classification.

Spinning up 16,000 A1 Virtual Machines on Azure Batch

We recently completed a technical proof of concept to see if the new Azure Batch service could scale to meet the demands of a Big Compute workload.