Creating a data platform capable of monitoring the world's oceans in near real time, to prevent illegal fishing and human trafficking.

OceanMind are a not-for-profit who provide actionable insights to law enforcement to prevent illegal fishing, human trafficking, and illegal salvage operations on war graves and heritage sites.

OceanMind were a Microsoft AI for Earth Grantee in 2019, and have been featured in multiple keynotes over the past year, including Future Decoded 2019, and the 2019 Web Summit.

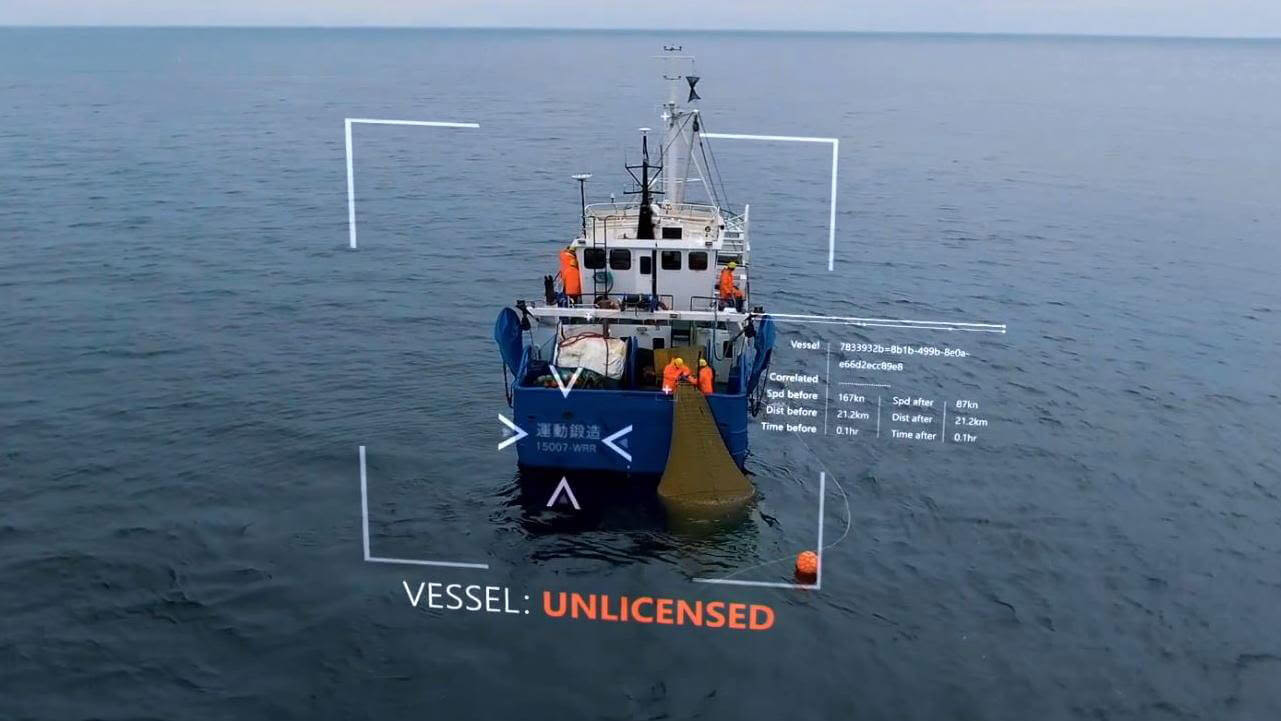

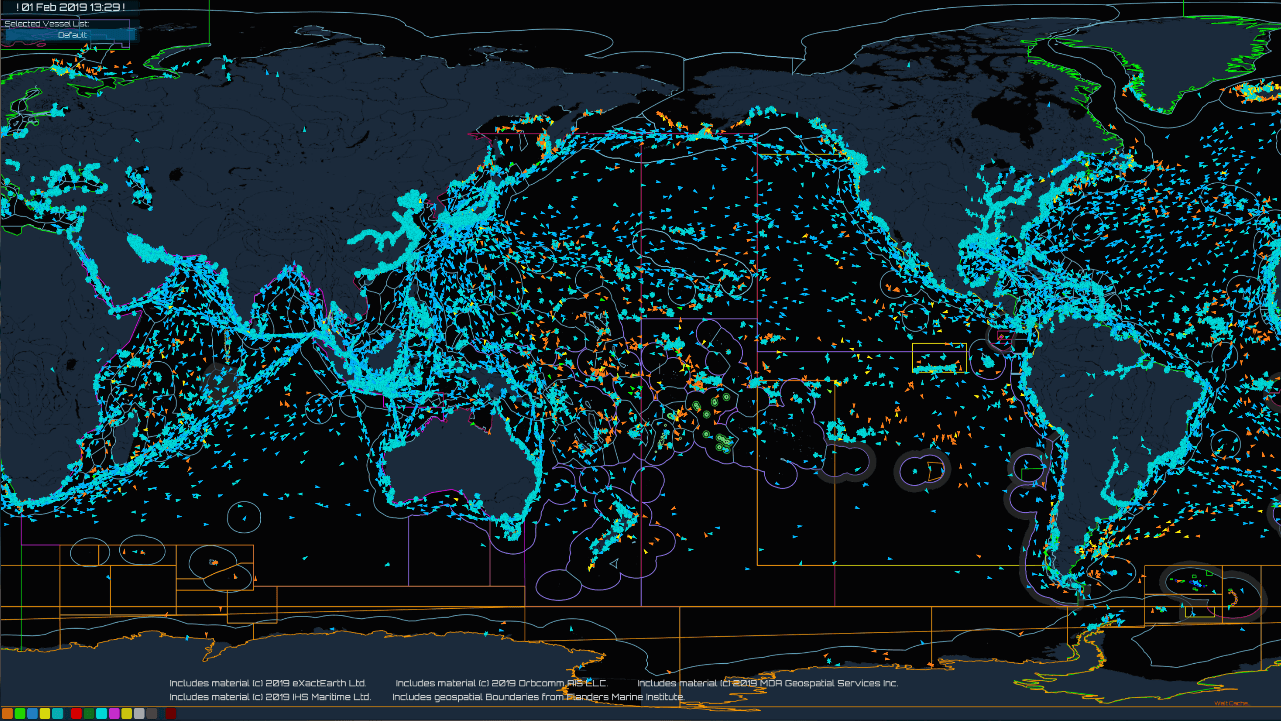

Using vessel telemetry from around the world, they employ complex geospatial analysis and Machine Learning to detect these illegal activities.

The challenge with fisheries, particularly on the global scale, is the sheer amount of data. This...really has been a game changer for our organization. In the past we've been very batch oriented, so we've only had a limited scale that we've been able to apply to the problem. Now we can get more results, more quickly, and save our analysts time.

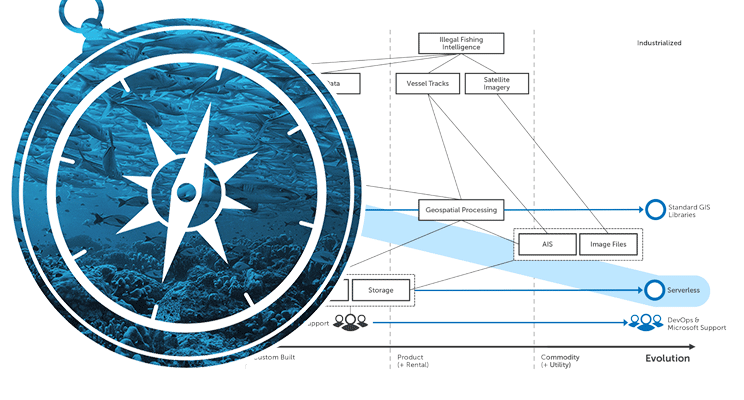

Being a not-for-profit and a small team, OceanMind needed a cost-effective way to spend less time on system maintenance and more time on improving their analytical techniques and maximising their impact. Alongside this, they wanted to move away from batched overnight processing towards more real-time analysis. This would shorten the time from activity to detection to action. They decided to migrate their on-premises systems to the cloud.

OceanMind is using the power of AI and harnessing the world's data about vessels on the seas, analyzing them in real time, and sharing the results with the world's governments to improve the sustainability of the planet's oceans. It shows what technology can do. It shows that we each have a role to play, if we're going to address the great problems that the world puts in front of us.

This was where we came in.

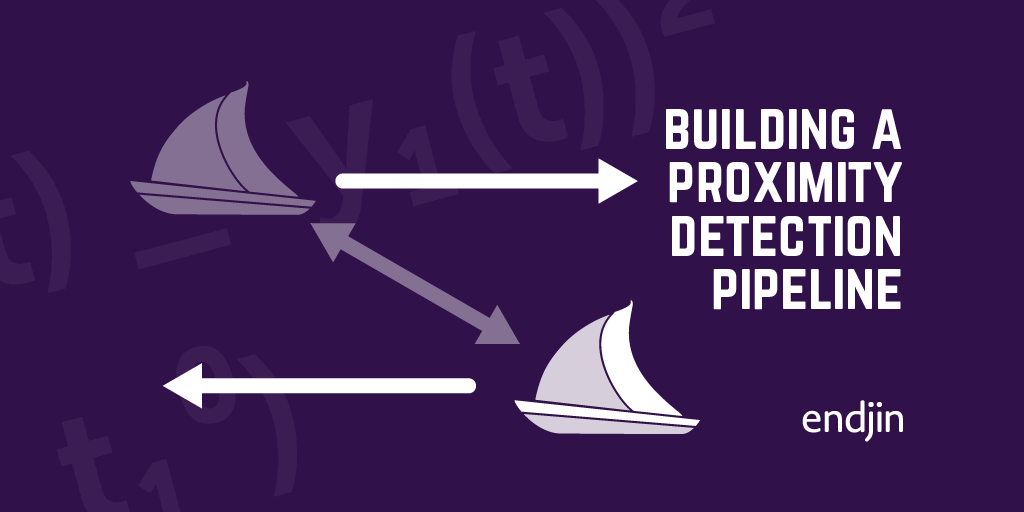

Over the course of three months, we designed and built a cloud-facing serverless architecture. We used Azure Functions and Durable Functions orchestration to find ships that had been in contact for a certain amount of time. These events were then used to power the Machine Learning algorithms that detect illegal fishing and other activities.
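To give a feel for the kind of check described above, here is a minimal sketch in plain Python of finding periods where two vessels stay close to each other for a minimum length of time. It is an illustration under stated assumptions, not the production code: the `Position` type, the `find_contacts` function, the 0.5 km distance threshold, the one-hour minimum duration, and the assumption that both tracks are sampled at the same timestamps are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0


@dataclass(frozen=True)
class Position:
    """One telemetry report for a vessel (hypothetical shape)."""
    vessel_id: str
    timestamp: datetime
    lat: float
    lon: float


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometres."""
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    h = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(h))


def find_contacts(track_a, track_b,
                  max_distance_km=0.5,               # assumed threshold
                  min_duration=timedelta(hours=1)):  # assumed threshold
    """Return (start, end) intervals where the two vessels stay within
    max_distance_km of each other for at least min_duration.

    Assumes both tracks report at the same timestamps; a timestamp that
    is missing from track_b ends the current run of close reports.
    """
    b_by_time = {p.timestamp: p for p in track_b}
    contacts = []
    start = last = None

    def close_run():
        # Keep the current run only if it lasted long enough.
        if start is not None and last - start >= min_duration:
            contacts.append((start, last))

    for a in sorted(track_a, key=lambda p: p.timestamp):
        b = b_by_time.get(a.timestamp)
        near = b is not None and haversine_km(a.lat, a.lon, b.lat, b.lon) <= max_distance_km
        if near:
            if start is None:
                start = a.timestamp
            last = a.timestamp
        else:
            close_run()
            start = last = None
    close_run()  # flush a run that reaches the end of the track
    return contacts
```

In a serverless design, a function like this could plausibly run as a single unit of work per vessel pair, with an orchestration fanning out over candidate pairs and collecting the resulting contact events. That mapping is an assumption for illustration only.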

We introduced secure data structures that protected and segregated the data according to government and compliance regulations. We also employed high-performance compute to increase performance and reduce cost. Using our benchmarking tools, we analysed the solution and found that, extrapolating our extensible architecture to support their remaining workloads, the estimated compute cost for the solution came to less than £10 / month.

I love hearing about the way a really small organisation is using technology to scale well beyond the size of the group of the people they have. When people look at the work of OceanMind they think they are looking at the work of a large multinational organisation, but instead it's a small team of dedicated, hardworking individuals.

As a result, OceanMind are now able to carry out their analysis in close to real time. Due to the reliability introduced by the cloud, far less time is spent on firefighting. This allows more time for innovation and exploration of the huge wealth of Microsoft Machine Learning technologies. They are also able to operate well within their budget constraints due to the low running cost of the serverless solution we designed. In this way, endjin took a complex problem and constructed a solution optimised for the customer's specific needs.