AI Strategy: Think Top-Down, Experiment Bottom-Up

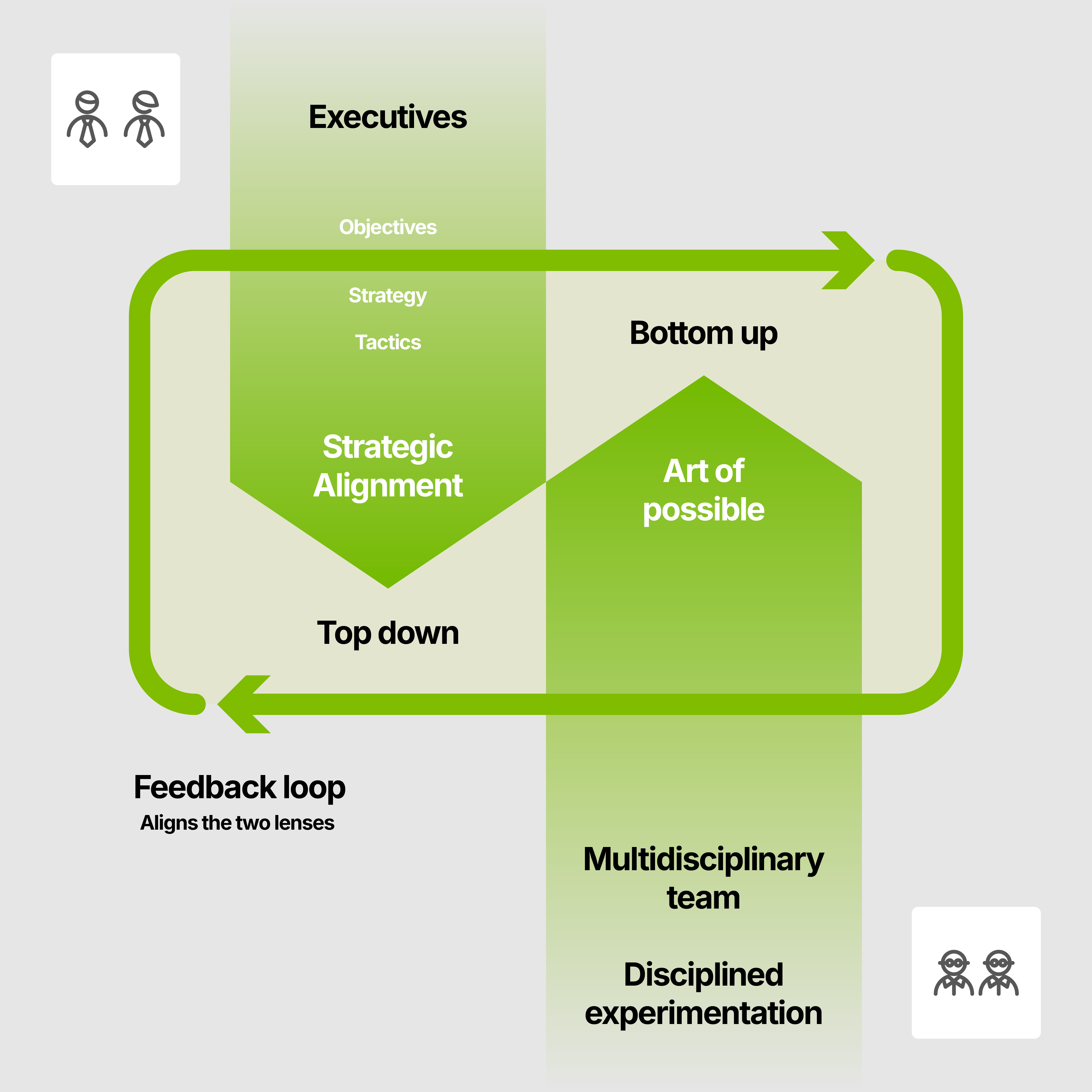

TL;DR: Most AI initiatives fail because organisations pick one lens. Top-down planning without bottom-up experimentation produces disconnected roadmaps; bottom-up experimentation without top-down direction produces personal productivity wins that never scale. Running both simultaneously, with a feedback loop between them, is what separates the 20% that succeed from the 80% that don't.

This blog is for leaders who have either watched a previous AI initiative stall, or are about to launch one and want to avoid the same pitfalls. If your organisation is stagnating when it comes to taking meaningful steps towards embracing AI, this is for you.

Most organisations approach AI strategy from one direction only:

- Some wait for a grand plan to be created and handed down for implementation; or

- Others unleash enthusiasm without direction, hoping something transformative will emerge from the activity.

Both approaches tend to fail.

The organisations making real, durable progress with AI have discovered that you need to move in both directions at the same time. Top-down, to ensure that everything connects to goals that actually matter to the business. Bottom-up, to surface what is genuinely possible with today's tools. At the intersection of these two approaches you will discover opportunities that no amount of strategic planning could have anticipated.

This is not a new idea. But the arrival of agentic AI has made it more urgent than ever.

What has changed: from chat to agentic

The reason this dual-lens approach has become more urgent is that the technology has shifted in ways that many organisations have not yet absorbed.

Two years ago, AI tools were primarily conversational. You prompted, the model responded, you reviewed the output, accepted it or re-worked the prompt and tried again. The human was in the loop at every step. This made it relatively easy to experiment, but also limited the scope of what could be achieved.

Around Christmas 2025, a new generation of "agentic AI" arrived, with Claude Opus leading the way. These tools unlocked a new paradigm: rather than answering questions, the AI could now be given a goal (e.g. tidy up my inbox, generate this report, research this set of opportunities) and then use multiple tools to act autonomously to complete it, returning to the human only when a decision or approval is genuinely needed. The human becomes a conductor rather than a performer.

This matters for strategy because it changes the economics of what is feasible. Tasks that previously required dedicated engineering or analyst time can now be completed in a fraction of the time by a well-configured agent. The bottleneck has shifted from "can we build this?" to "have we identified the right problem to solve and is the environment ready to enable us to use AI to solve it?"

Both of those questions are answered by the dual-lens approach: the top-down lens identifies the right problems, and the bottom-up lens surfaces the feasibility realities.

A conversation we keep having

We have been having a version of the same conversation with clients across a range of sectors recently. It goes something like this:

First, the optimist: someone in the business who has seen what modern AI tools can do and wants to get people together and start experimenting. They are frustrated by the pace of progress and worried that the organisation is falling behind. Their instinct is right.

Then there is the pessimist: someone else who is pushing back. Not because they are opposed to AI, but because they have seen this before: a previous wave of enthusiasm driven by technology X (replace X with any of: blockchain, chatbots, home assistants, cloud, RPA, big data, IoT or the metaverse), where a working group met for a few months, explored a few ideas, and then quietly dissolved. Nothing changed. Their caution is also right. They want to take a more measured approach.

What makes this dynamic interesting is that both people are responding to a real risk. The first is worried about analysis paralysis. The second is worried about wasted energy. And yet the tension between them often results in exactly the outcome both wanted to avoid: delay, confusion, and a business that is neither planning well nor learning fast.

The resolution is not to choose one side. It is to run both simultaneously with a clear understanding of what each is trying to achieve.

The top-down lens: goals before tools

Later in this blog we examine why 80% of AI projects fail, and it is striking that the top three root causes have nothing to do with technology: the problem is not well defined, there is no executive sponsor, or there is no intent to transform the operating model to take advantage of AI. These are all organisational failures, not technical ones.

This is why the top-down lens is critical. Without it, even the most energetic experimentation will produce personal productivity gains at best, and expensive dead ends at worst.

Don't create an AI strategy. Instead, revisit your business strategy through the lens of what AI now makes possible: make it "AI infused".

The framing we find most useful here is the OST model: Objective → Strategy → Tactics.

The objective answers the question: what is the organisation actually trying to achieve? Not "use AI more", because that is a tactic masquerading as an objective. Real objectives sound like: grow revenue in new markets, improve the efficiency of new customer onboarding, or reduce the time it takes to respond to new business opportunities. These are measurable. They existed before anyone mentioned AI.

The strategy then asks: what is our approach? Are we working within existing tooling constraints, or are we prepared to introduce new platforms? What does success look like in 12 months?

The tactics identify the specific tools, agents, models and workflows. They should sit last. Choosing your tactics before you have defined your objectives is one of the most reliable ways to waste significant time and money on AI.

In practice, we find that organisations frequently conflate these levels. When people say they want an "AI strategy," they often mean they want clarity on objectives: what it is they are actually trying to do, and why it matters. Getting explicit about that distinction is frequently the most valuable conversation a leadership team can have.

One good example of the OST framework is what AstraZeneca communicated at Connected Data London 2025, which is structured as objective, strategy, tactics:

Our Bold Ambition is to be pioneers in science, lead in our disease areas, and transform patient outcomes.

By 2030, we will:

- Deliver 20 new medicines

- Be an $80bn company

- ... and sustained growth thereafter.

To make our ambition a reality, we focus on:

- Advancing the next wave of science and technologies to deliver differentiated treatments

- Supporting our medicines and investment into our pipeline to transform care and maximise patient benefits

- Investing in the right teams and ways of working, keeping sustainability at our core

In contrast, here's the anti-pattern to an OST-aligned strategy:

CEO: "We need an AI Strategy"

CTO: "We're adopting Copilot"

Employees: "What am I supposed to do with this?"

What good top-down planning looks like

Good top-down AI planning does not require a lengthy programme of work before anything else can begin. It requires a clear and honest answer to a small number of questions:

- What are the two or three areas of the business where improvement would have the most significant impact?

- In each of those areas, what does the process actually look like today and where are the bottlenecks?

- Is the bottleneck a lack of capacity, a lack of speed, or something else?

- Who in the executive team owns this outcome, and are they prepared to provide active sponsorship?

The last question matters more than it might appear. An executive sponsor provides the air cover to push through organisational resistance, the authority to make operating model changes, and crucially, the permission for early ideas to fail without the team being penalised — provided the failure produces learning that gets shared. Without that sponsorship, even well-designed experiments tend to stall.

The bottom-up lens: showing the art of the possible

Top-down planning has a structural limitation. It depends on people knowing what problems exist and what solutions might be feasible. In practice, neither of those things is as clear as it appears from a strategy document.

People often do not know a problem exists until they start using a tool and discover a better way of working. Furthermore, decision-makers frequently cannot accurately assess what AI can and cannot do because their reference point is what it could do twelve or twenty-four months ago, which, given the pace of change, is a very different thing.

This is where the bottom-up lens is indispensable.

The prompt-a-thon era is over. The question is no longer "what could we do with AI?"; it is "what business processes are inefficient or unscalable, and how do we apply AI to fix them?"

The language of "prompt-a-thon" was appropriate when AI was genuinely novel and people needed permission to explore unfamiliar tools. That era has passed. The models have now evolved to the point where they are generally capable of delivering the outcome you want without much extra guidance. What remains is the application question. Answering that requires real business context, not a blank-canvas ideation exercise.

What bottom-up experimentation looks like today is closer to a community of practice: a small group of motivated people who are in touch with the state of the art (SoTA), actively building things, developing new skills and new ways of working. The most important aspect is that they share: their thinking, their mindset, the scenarios they explored, failures, wins and lightbulb moments.

Everything they work on should have a clear connection back to the strategic agenda. An experiment that produces a useful capability but has no link to an organisational objective is interesting but not durable. An experiment that demonstrates that a specific bottleneck can be eliminated, and that links directly to a top-level goal, is investable.

What bottom-up experimentation achieves that planning cannot

There are three things that only hands-on experimentation can surface:

- Feasibility signals. An idea that looks strong on paper may hit an insurmountable obstacle the moment someone tries to implement it: most commonly, poor data quality. Discovering this through a small contained experiment is far cheaper than discovering it six months into a funded programme.

- Opportunity discovery. Some of the most compelling AI use cases emerge when someone, in the process of solving a specific problem, stumbles into a capability that neither they nor their leadership team had anticipated.

- Education through demonstration. Leaders who have not worked directly with modern agentic tools consistently underestimate what is now possible. Showing is more effective than telling: a live demo of an agent completing in seconds what would take a human several hours changes the conversation at the strategic level in a way no slide deck can.

Why most AI initiatives fail, and how this approach changes the odds

Our own experience, backed up by research from RAND, MIT and McKinsey, points to a single, uncomfortable finding:

AI projects fail for organisational reasons, not technical ones. The models work. The infrastructure exists. What breaks is everything around them.

Here are the ten most common root causes, which rarely occur in isolation. We have grouped them by which lens of the dual-lens approach is best placed to surface them early.

Failures the top-down lens prevents:

- Problem not clearly defined: people embark on the project without a clear view of the goal or success criteria.

- No executive sponsorship: nominal endorsement without genuine ownership. Nothing changes because no one with authority has a stake in the outcome.

- Workflow not redesigned: AI is bolted onto existing processes rather than used as the occasion to rethink how work gets done. McKinsey identifies this as the single strongest predictor of value, yet only 20% of organisations do it.

- Technology-led, not problem-led: adopting the latest model or platform before establishing whether it solves a real problem more effectively than the current approach.

A failure that needs both lenses working together:

- Cultural and organisational unreadiness: the technology works, but people don't trust it, don't understand it, or have no incentive to change how they work. Integration, compliance, and change management complexity are consistently underestimated. Top-down sponsorship signals that change is real; bottom-up demonstration shows people what "good" looks like in practice.

Failures the bottom-up lens surfaces early:

- Data readiness used as a blocker, not a starting point: fragmented or ungoverned data is real, but it is more often used as a reason to do nothing than as a problem to solve. AI does not require perfect data. Start with the use cases where the data is good enough; let early experiments expose which data problems matter most, and prioritise from there.

- Pilot purgatory / failing slowly: sunk cost bias keeps projects alive long after the evidence says otherwise. No pre-agreed criteria for when to stop.

- Building instead of buying: internal builds succeed roughly half as often as purchasing specialist tools, yet many organisations default to building, particularly in regulated sectors.

- Technology not ready: the supporting infrastructure is not in place, or the AI is available but is not yet capable of solving this specific problem.

- Outputs not verified: hallucinated or incorrect content acted upon without adequate checks. Governance treated as a compliance task rather than a design principle.

The dual-lens approach is specifically designed to surface each of these failure modes early, ideally before significant time, energy and budget have been expended.

If you have defined clear objectives and secured genuine executive sponsorship before any experimentation begins, you have eliminated the two most common causes of failure before you have started. And small, focused experiments will reveal data, capability and technology limitations at minimal cost, long before they become expensive problems. The goal is not to succeed every time; it is to learn as quickly and cheaply as possible, and to efficiently find the 20% of ideas worth investing in with confidence.

The cheapest thing you can do is talk. The most expensive thing you can do is build. Fail ideas on paper before you commit engineering resource.

This reframing is important. In our experience, organisations that treat early failure as a sign that the approach is not working are applying the wrong success criteria. Early failure of an idea is a success: it has released resources for something more promising. The organisations that make real progress are those that have built the discipline to kill bad ideas quickly, and the structures to identify good ones before they are buried under the weight of everything else.

The incubator model: protecting the work from cultural friction

In many organisations, bottom-up experimentation runs into cultural resistance because the established culture has developed strong immune responses to anything that challenges the existing way of working. The resistance is rarely explicit. It manifests as lack of engagement, approval processes that move slowly, and a quiet failure to adopt new ways of working.

This dynamic is framed with clarity in Vijay Govindarajan and Chris Trimble's The Other Side of Innovation, published in 2010. Every established organisation runs what they call a Performance Engine: the systems, processes and people optimised for repeatable, predictable execution of today's business. The Performance Engine is what pays the bills. But its instinct, when faced with anything that disrupts that repeatability, is to push it away. Innovation and ongoing operations, they argue, are inevitably in conflict.

Govindarajan and Trimble's prescription is to set up what they call a Dedicated Team (we use the term incubator to describe the same idea):

- Small: no more than four to six people, senior enough to have credibility and access, focused enough to move quickly.

- Cross-functional: custom-built for the initiative, combining business domain expertise with technical capability and fuelling the interactions where the hard problems get solved.

- Mandate-driven: connected explicitly to one or two strategic objectives, so the work is always anchored to something the business has said it cares about.

- Protected: insulated from the day-to-day pressures and approval cycles that slow down mainstream teams.

- Effective communicators: capable of reporting learnings back to the wider organisation in an engaging, constructive way. A primary output is knowledge, and demonstrating that change is possible.

Crucially, this is not a walled-off skunk works project. Govindarajan and Trimble are emphatic that the Dedicated Team must work in active partnership with the Performance Engine, drawing on its expertise, infrastructure and customer relationships. The incubator is a different operating model running alongside the main business, not a parallel universe disconnected from it. This is also not a permanent structure: it is a mechanism for generating the proof points that allow the broader organisation to move forward with confidence.

Putting it together: a practical starting point

For an organisation beginning this journey, or restarting after a previous initiative that did not gain traction, the following sequence tends to work well.

Start with a short, honest conversation at leadership level about the business. What are the two or three things the organisation most needs to be true in three years that are not true today? Where are the constraints on growth, efficiency, or resilience? This conversation should avoid mentioning AI at all. Its purpose is to establish the objectives (think OST!) that will anchor everything that follows.

Identify a small group of motivated experimenters. Look for people with the best combination of business insight, technical curiosity, and the judgment to connect what they discover to what the organisation actually needs. The best people tend to be busy generating revenue, but the truth is you want to scale and automate what makes your rainmakers successful. Taking them away from the "day job" to contribute to disciplined experimentation with AI is a significant decision, but it also signals strategic intent. Give them a clear remit: to explore what is possible, connect it to objectives, and share what they learn.

Create a feedback loop between the two. The experimenting group should be reporting back to the leadership regularly. Not to present polished findings, but to share raw discoveries: what worked, what failed, what surprised them, and what they now believe is possible that they did not before. These discoveries should be able to reshape the strategic agenda, not just be assessed against it. Be prepared for surprises that may lead to a significant strategic "pivot".

Kill ideas early and often. Evaluate each emerging idea against the objectives. Does it address a clearly defined problem? Is the data in good enough shape to support it? Is there an executive owner who will champion adoption? If the answers are no, retire the idea and move on. Capture why each idea was killed in a lightweight log — this prevents the organisation rediscovering the same dead ends six months later, and gives executive sponsors visibility of the funnel rather than just the survivors.

Invest in the 20%. When an idea passes these tests, addressing a real problem with a credible path to adoption, commit to it properly: a dedicated team empowered with budget, clear objectives, a realistic timeline, and an executive sponsor who is prepared to do the work of organisational change as well as technology delivery.
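
For teams that prefer to keep the "kill ideas early and often" log in something more structured than a spreadsheet, the three screening questions above can be sketched in a few lines of Python. This is only an illustration, not a prescribed tool: the names `Idea`, `KillLog` and `screen` are hypothetical, and the rule that a single "no" retires an idea is our assumption about how strictly to apply the test.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Idea:
    """A candidate idea from bottom-up experimentation (hypothetical structure)."""
    name: str
    objective: str              # the business objective the idea claims to serve
    problem_defined: bool       # does it address a clearly defined problem?
    data_good_enough: bool      # is the data in good enough shape to support it?
    has_executive_owner: bool   # is there an executive who will champion adoption?


@dataclass
class KillLog:
    """Lightweight record of retired ideas and the reasons they were retired."""
    entries: list = field(default_factory=list)

    def record(self, idea: Idea, reasons: list) -> None:
        self.entries.append({
            "date": date.today().isoformat(),
            "idea": idea.name,
            "objective": idea.objective,
            "reasons": reasons,
        })


def screen(idea: Idea, log: KillLog) -> bool:
    """Return True if the idea survives screening; otherwise log why it was killed.

    Assumption: any single failed question is enough to retire the idea.
    """
    reasons = []
    if not idea.problem_defined:
        reasons.append("problem not clearly defined")
    if not idea.data_good_enough:
        reasons.append("data not in good enough shape")
    if not idea.has_executive_owner:
        reasons.append("no executive owner")
    if reasons:
        log.record(idea, reasons)
        return False
    return True


# Example usage with made-up ideas
log = KillLog()
ideas = [
    Idea("Onboarding agent", "improve onboarding efficiency", True, True, True),
    Idea("Generic chatbot", "use AI more", False, True, False),
]
survivors = [idea.name for idea in ideas if screen(idea, log)]
print(survivors)
print(log.entries)
```

The point of the log is the second output: sponsors see the whole funnel, including what was retired and why, rather than only the survivors.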

The dual lens in practice

The organisations we work with that are making the most durable progress with AI are not the ones with the most sophisticated strategies, the most active experimentation programmes, the biggest budgets, or the latest AI tools. They are the ones that have built an effective "top-down, bottom-up" approach.

Top-down without bottom-up produces a strategy that isn't deliverable or optimised. The idea of a plan, but without the "how". Disconnected from what is actually possible. Not tested against reality.

Bottom-up without top-down is a "solution looking for a problem". It produces impressive demonstrations that do not accumulate into business transformation, with leadership asking "What did we actually get for all of this?"

Top-down and bottom-up together create something more valuable than either can produce alone: an "AI infused" strategy enabled by a learning system that gets smarter about where to invest, faster at identifying what will not work, and increasingly capable of delivering the outcomes that the organisation actually needs.

The technology is ready. The question, as always, is whether the organisation is.

If you are navigating this challenge and would like to explore how the dual-lens approach could apply to your organisation, our 90-minute Data Strategy Briefing provides a practical starting point. And our Data Product Canvas offers a structured way to evaluate the portfolio of ideas that bottom-up experimentation will generate — helping you identify the 20% worth investing in before you commit significant resources to them.