5 lessons learnt from using Power Automate

TL;DR

1. Give your steps descriptive names
2. Organize with scopes
3. Always use solutions
4. Use child flows
5. Keep environments separate

We recently completed a project for a client that used Power Automate (Microsoft's low-code platform, previously called Microsoft Flow) for orchestrating a daily business process.

The platform is based on creating flows to automate processes, where each flow consists of a trigger followed by a series of actions (a.k.a. steps). There is a rich library of built-in connectors, particularly geared towards integration with other parts of the Microsoft 365 and Azure ecosystems, which was partly what made it an attractive choice for this project.

In this blog post, I will list some of the lessons we learnt while building the solution about how to get the best out of Power Automate.

1. Give your steps descriptive names

One of the benefits of building flows in Power Automate is how simple it is to add steps, so you can quickly build up a complex flow. Unfortunately, by default, each new step is given the name of its action type (with a number appended if the flow contains more than one instance of that action type).

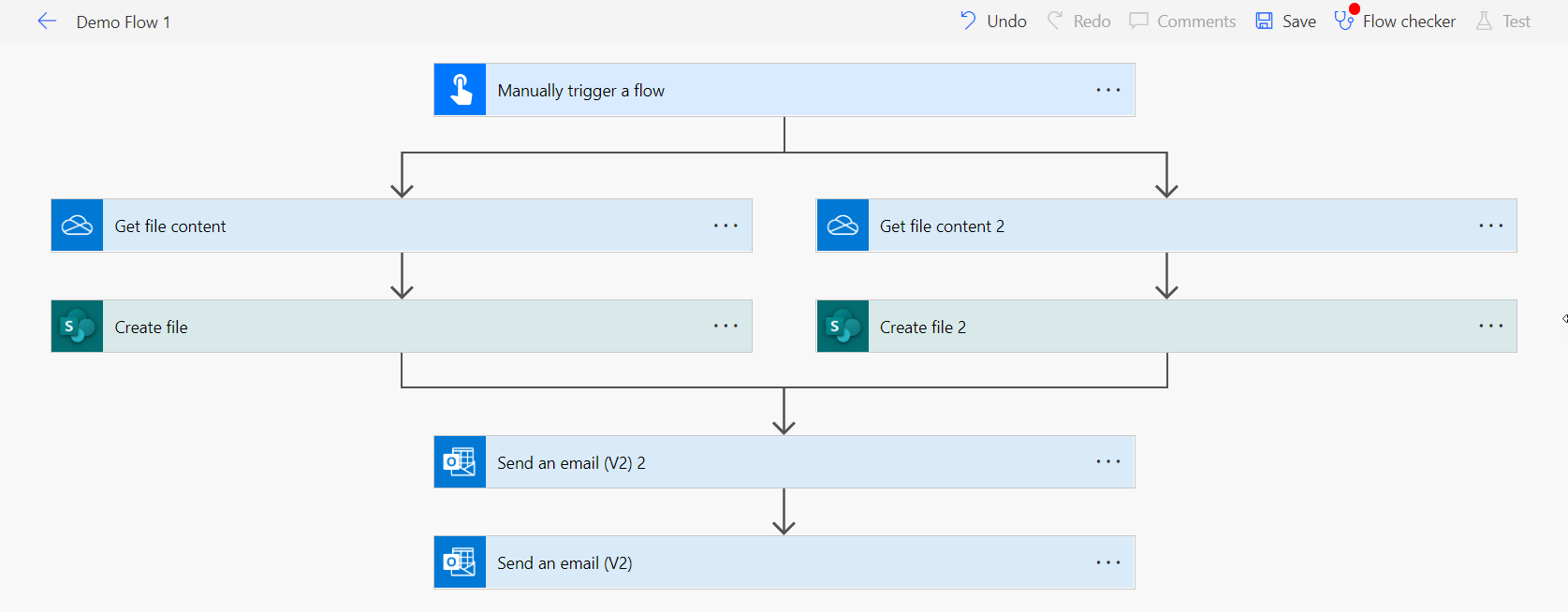

So, without any changes, you can end up with flows that look like this:

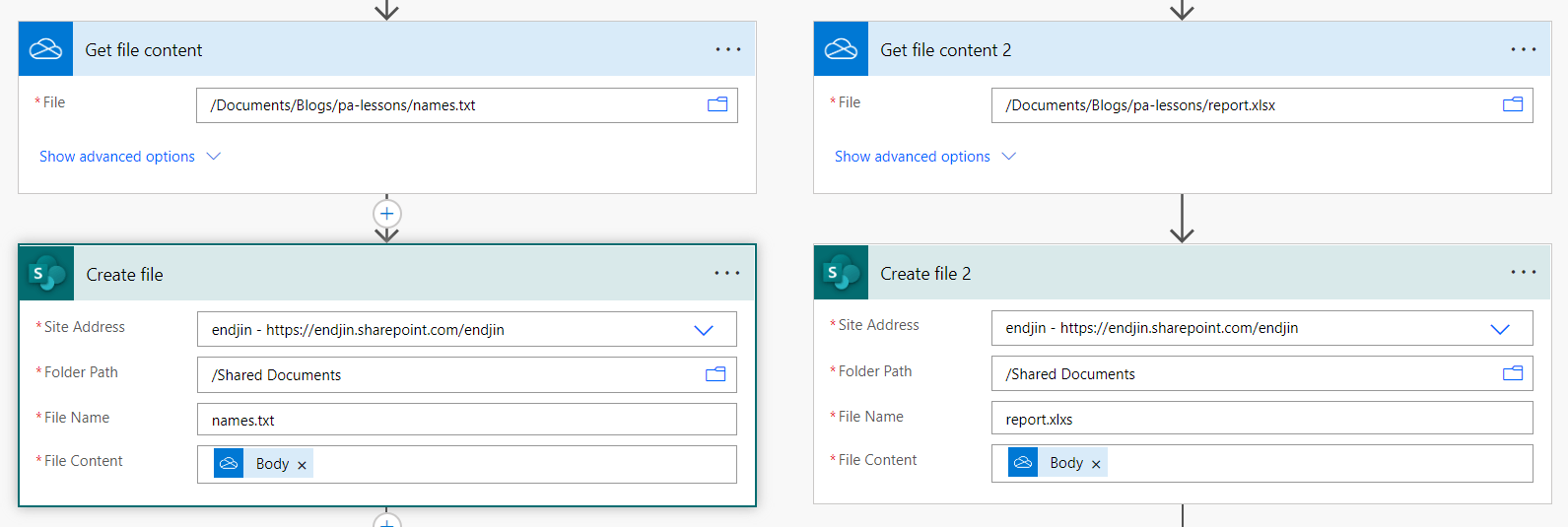

It's not obvious what any of the steps are doing without drilling down into them and looking at their configuration. For example, we can only see which files and folders the 'Get file content' and 'Create file' actions are dealing with by expanding them:

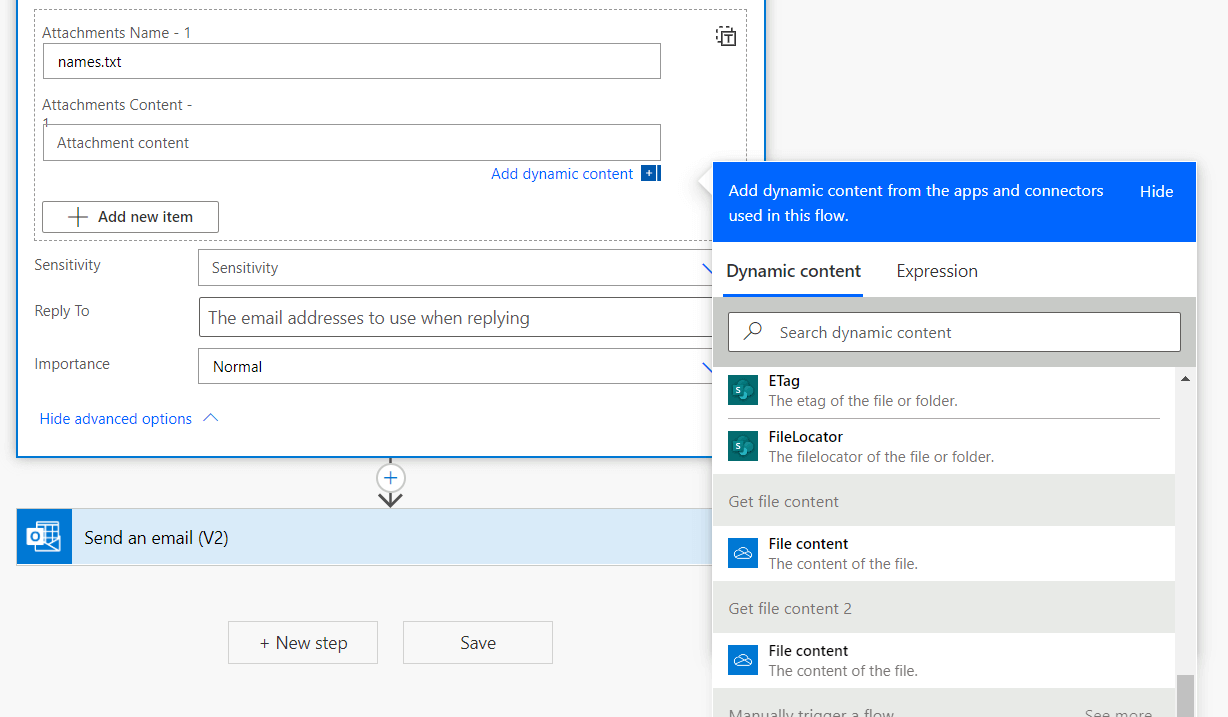

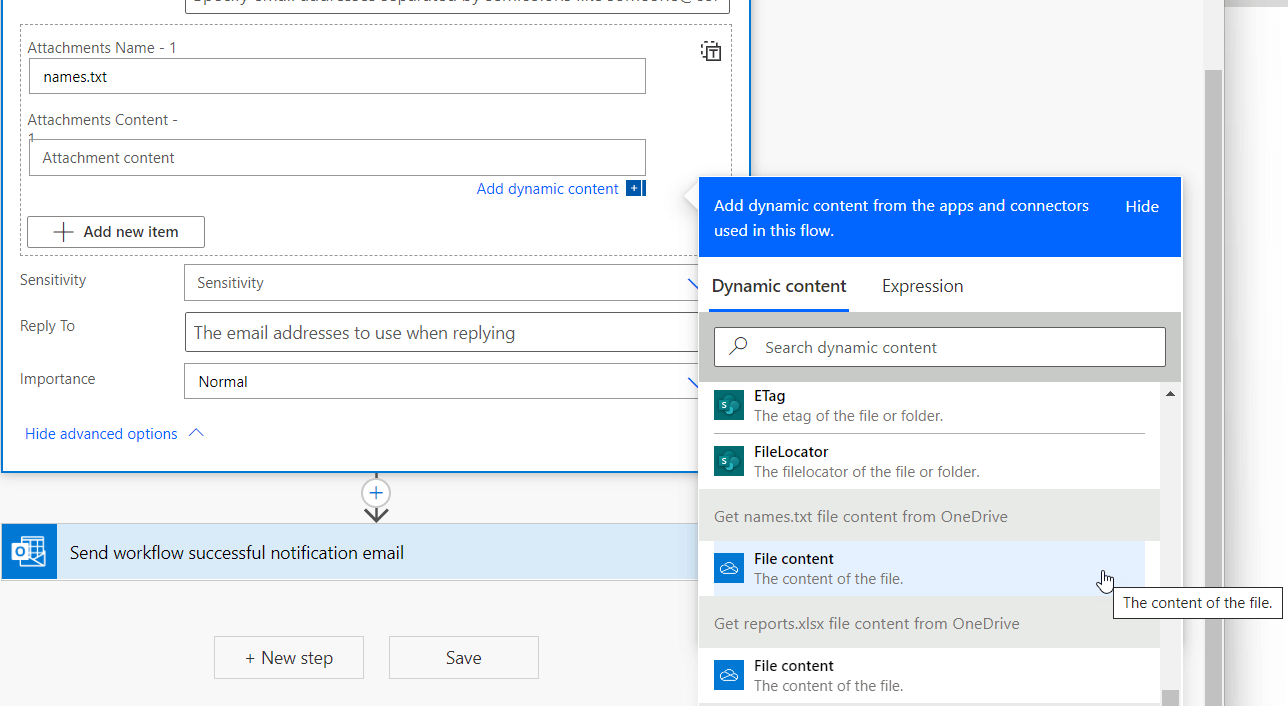

Having to do this makes both monitoring flow runs and developing the flow more difficult. For instance, suppose we want to use the content of the names.txt file as a dynamic input for another step.

Without going back to the details of the previous steps, it's not obvious which 'File Content' to choose:

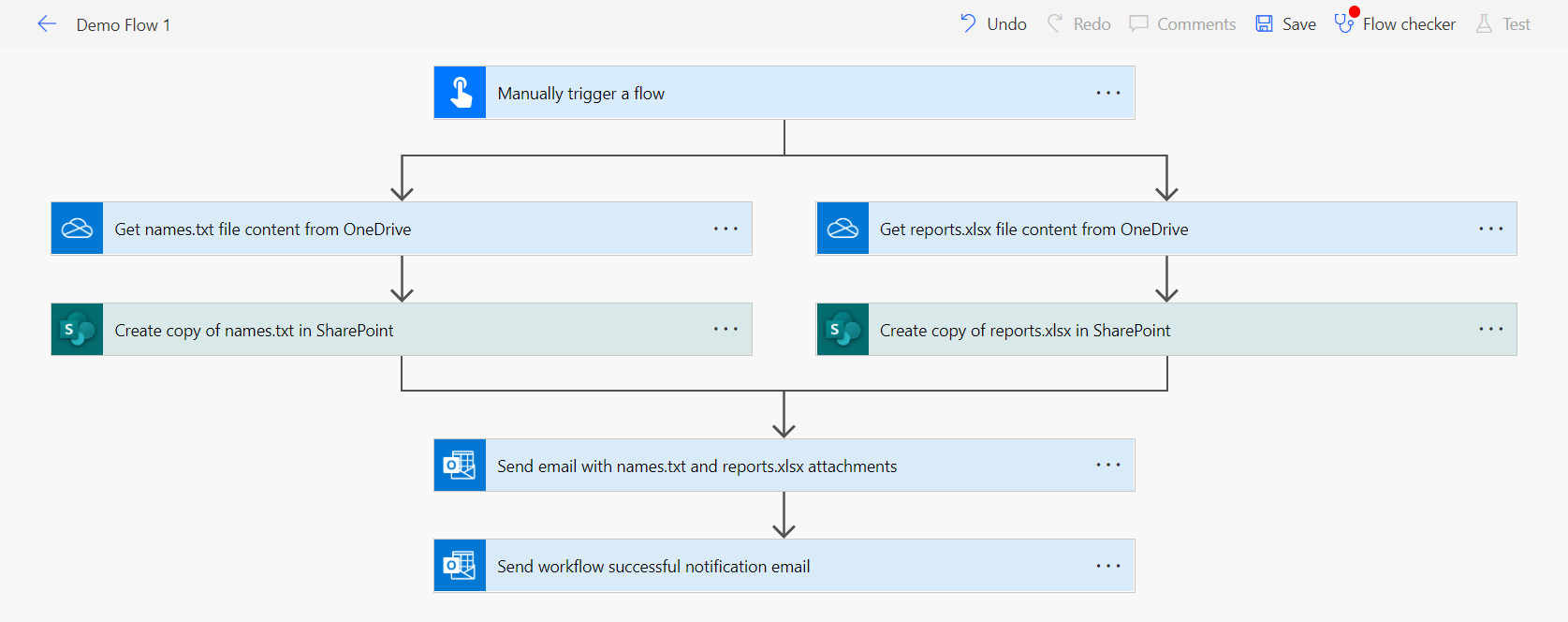

If we instead rename every step with a useful description of what it does, the intent of the flow becomes much clearer:

And it becomes easier to pick the right dynamic content:

One thing to be aware of when renaming steps: if any existing expressions in your flow reference an output of the renamed step, you will have to update those expressions to use the new name.
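To make the renaming issue concrete, here is a small Python sketch. It assumes that an expression refers to a step by its display name with spaces replaced by underscores, e.g. `body('Get_file_content')`, and it ignores any other special characters. The step names and the `rename_step_references` helper are hypothetical; the sketch only shows which references would need updating, not how Power Automate itself handles a rename.

```python
import re


def reference_name(display_name: str) -> str:
    # Assumption: expressions refer to a step by its display name with
    # spaces replaced by underscores ('Get file content' -> 'Get_file_content').
    return display_name.replace(" ", "_")


def rename_step_references(expression: str, old_name: str, new_name: str) -> str:
    """Rewrite quoted references to old_name, e.g. body('Get_file_content')."""
    old_ref = re.escape(reference_name(old_name))
    new_ref = reference_name(new_name)
    # Match only when the name is the whole quoted argument, so a step called
    # 'Get_file_content_2' is left untouched.
    return re.sub(rf"'{old_ref}'", lambda _m: f"'{new_ref}'", expression)


# Hypothetical expression referencing two similarly named steps.
expr = "concat(body('Get_file_content'), body('Get_file_content_2'))"
print(rename_step_references(expr, "Get file content", "Get names file content"))
# concat(body('Get_names_file_content'), body('Get_file_content_2'))
```

Note how the second step keeps its default name: a partial rename like this is exactly the situation where a default name such as 'Get file content 2' gets confused with a renamed step.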

2. Organize with scopes

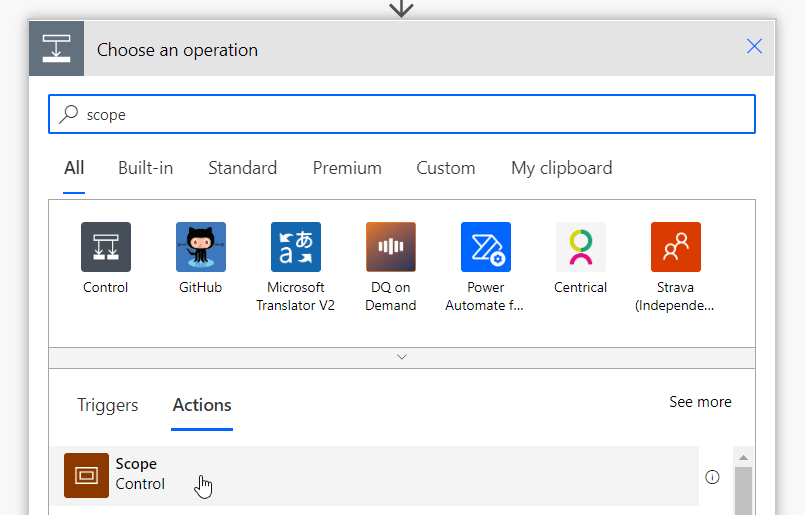

Another way to better express the intent of your flows, whilst also keeping them clean and easy to read, is to use the Scope action (one of the built-in Control actions). A scope is essentially a named group of steps.

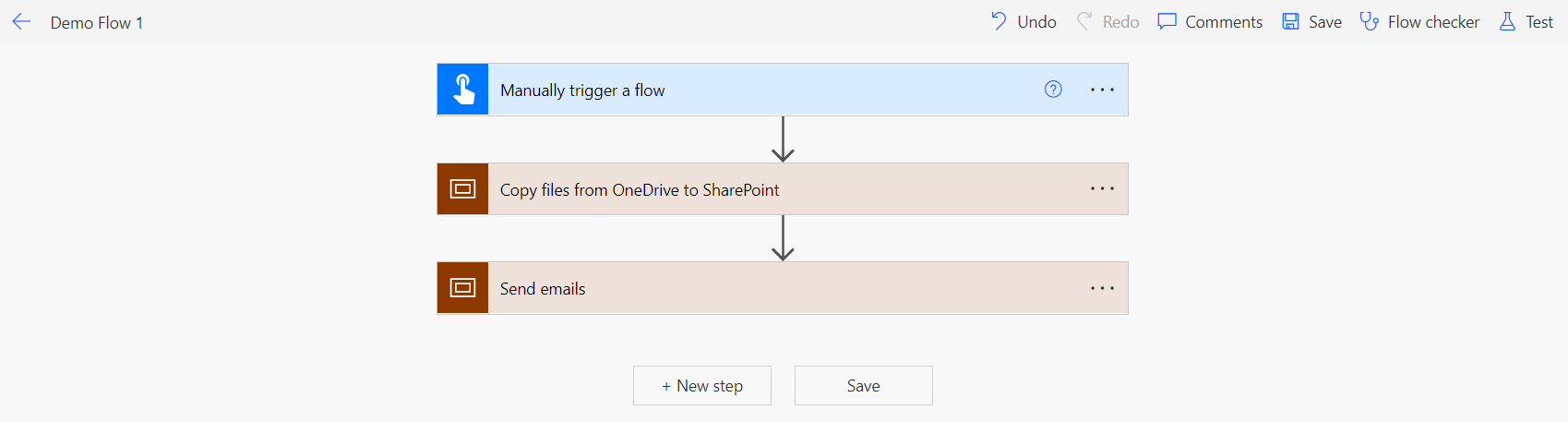

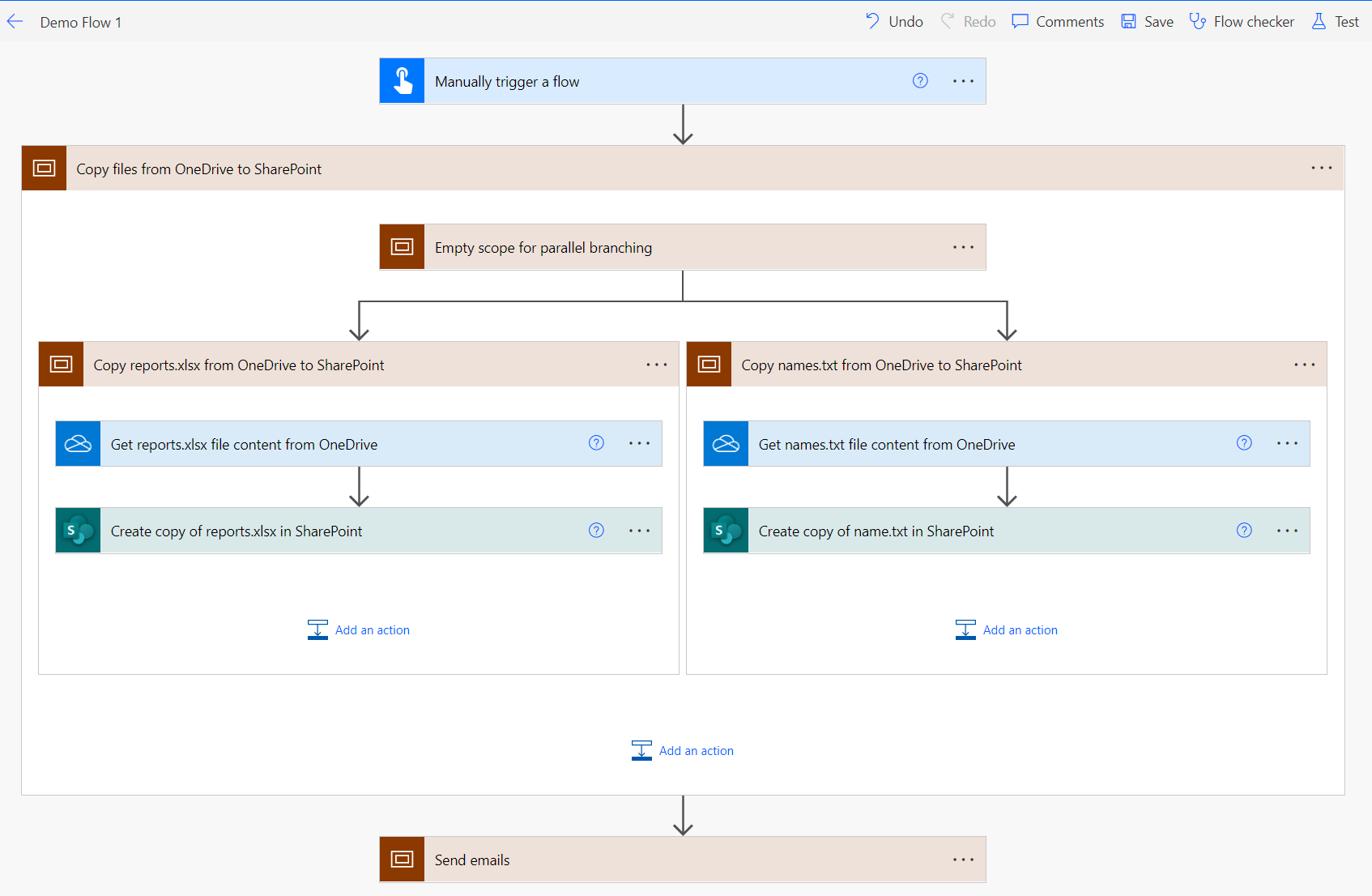

Taking the previous example, we could use scopes to organize the steps into logical groups:

We can even nest scopes:

(Note: you may have noticed the step named 'Empty scope for parallel branching'. Power Automate does not allow you to create parallel branches inside a scope unless there is a step to branch from, so we added an empty scope for that purpose.)

Scopes also make it easier to move a group of steps around the flow, or to copy a group of steps (although you may want to consider a child flow if you are copying multiple steps, which we will look at later in the post).

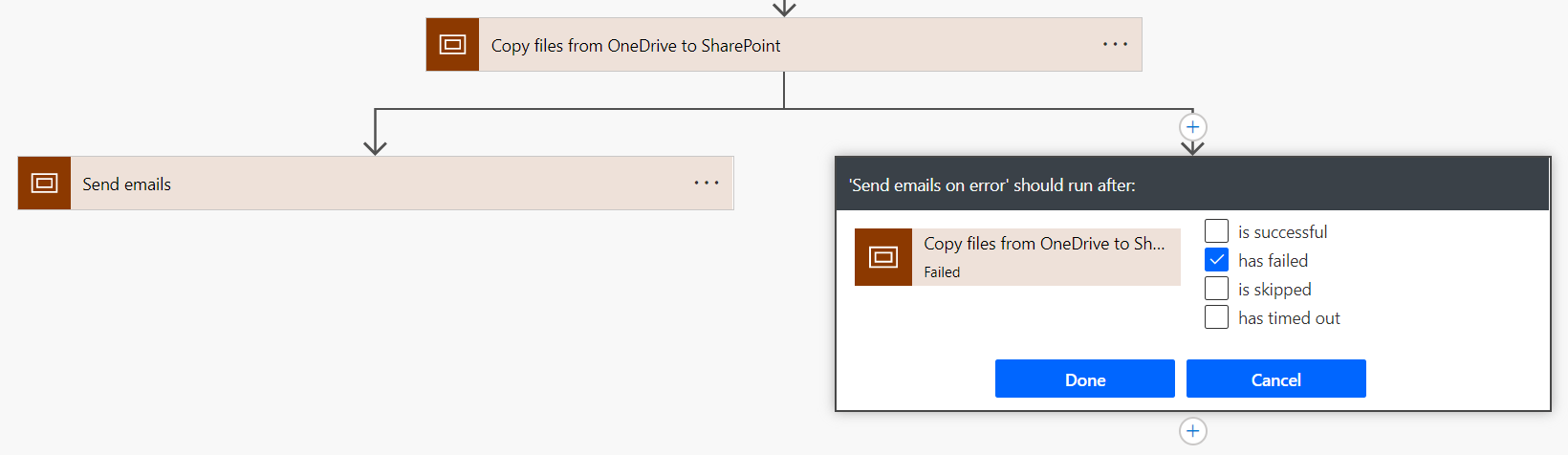

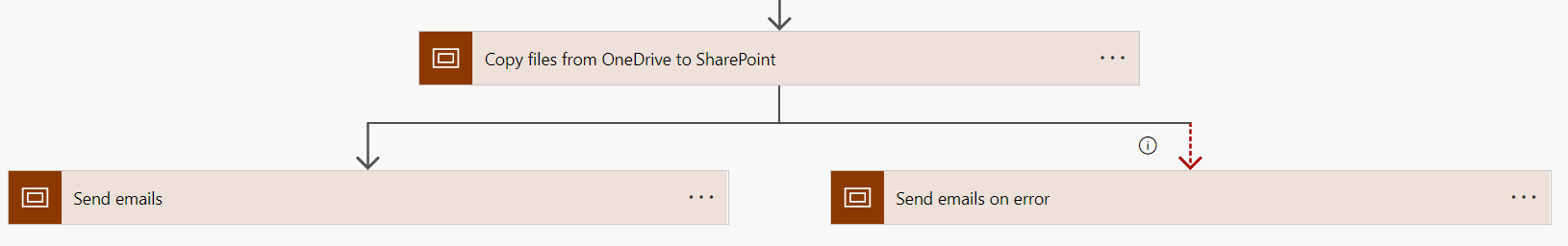

Scopes also help with error handling: you can configure an error-handling step to run based on the outcome of the scoped group of actions (e.g. if any action within the scope fails). In this example, we send different notification emails if the preceding scope fails:

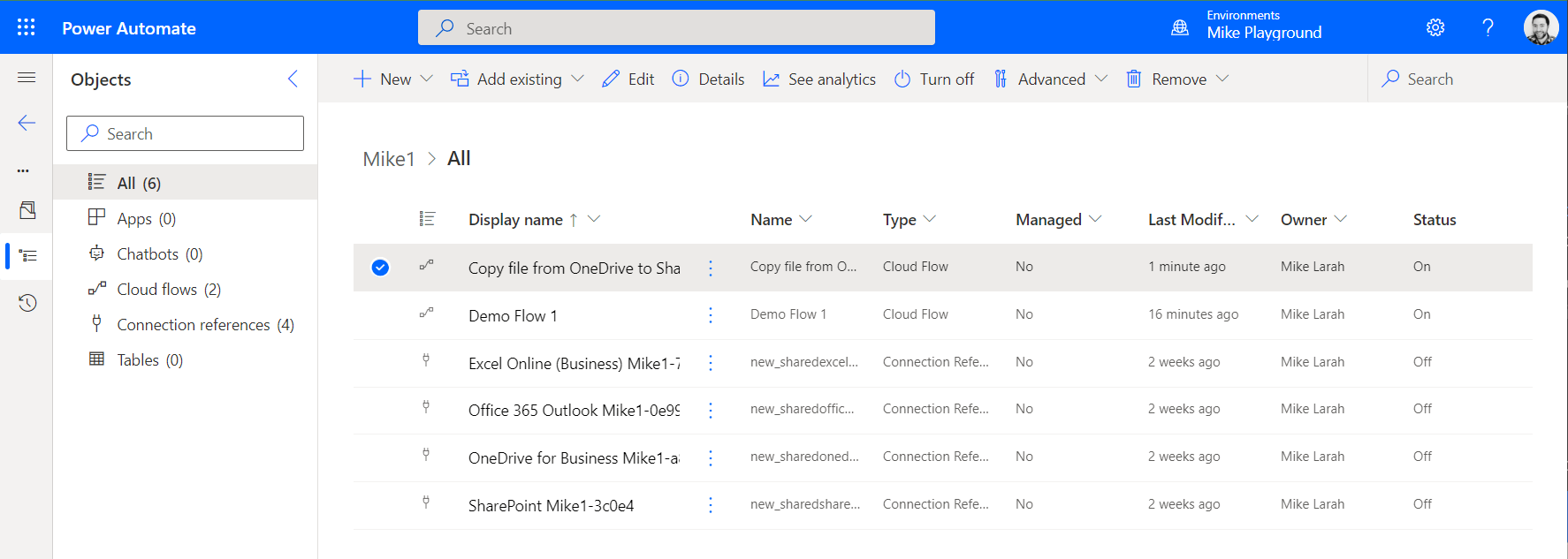
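This behaviour is controlled by each step's 'Configure run after' setting, which lists the outcomes of the preceding action or scope that should cause the step to run. The Python sketch below models that rule in a simplified way. It assumes the statuses Succeeded, Failed, Skipped and TimedOut, treats a scope as Failed if any action inside it failed, and uses hypothetical step names. It is an illustration, not how the Power Automate engine is implemented.

```python
from dataclasses import dataclass

# Statuses a step can be configured to run after ('Configure run after').
SUCCEEDED, FAILED, SKIPPED, TIMED_OUT = "Succeeded", "Failed", "Skipped", "TimedOut"


def scope_status(inner_statuses: list[str]) -> str:
    # Simplification: a scope fails if any action inside it failed.
    return FAILED if FAILED in inner_statuses else SUCCEEDED


@dataclass
class Handler:
    name: str
    run_after: set[str]  # statuses of the preceding scope that trigger this step


def handlers_to_run(previous_scope_status: str, handlers: list[Handler]) -> list[str]:
    # A step runs only if the preceding scope ended in one of its configured statuses.
    return [h.name for h in handlers if previous_scope_status in h.run_after]


# Hypothetical notification steps placed after a scope.
handlers = [
    Handler("Send success email", {SUCCEEDED}),
    Handler("Send failure email", {FAILED, TIMED_OUT}),
]

# One action in the scope failed, so the action after it was skipped.
status = scope_status([SUCCEEDED, FAILED, SKIPPED])
print(status, handlers_to_run(status, handlers))
# Failed ['Send failure email']
```

Because the handler depends only on the scope's overall status, you do not need to configure 'run after' on every individual action inside the scope.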

3. Always use solutions

Power Automate has a feature called Solutions, which is essentially a way to group together logically related flows and other components (such as connection references and environment variables).

There are some advantages that come with using solutions.

It enables you to create and call child flows from other flows (see Use child flows).

Solutions also give you a portable bundle for your flows (i.e. you can export and import the solution as a whole), which enables more sophisticated application lifecycle management (ALM) processes. Exporting and importing can be done through the Power Automate portal, but also using the Power Platform CLI. In turn, Microsoft provides tasks for Azure DevOps (Microsoft Power Platform Build Tools) and GitHub Actions (Power Platform Actions) that use the CLI internally, so you can create CI/CD pipelines to manage deployments of your flows across environments (see Keep environments separate).
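As a rough illustration of scripting this with the Power Platform CLI, the Python sketch below only builds and prints `pac solution export` and `pac solution import` command lines rather than running them. It assumes `pac` is installed and already authenticated against the right environment (e.g. via `pac auth create`). The solution name `DailyProcess` and the file paths are hypothetical, and the exact flag syntax (for example whether `--managed` takes a value) may vary between CLI versions, so check `pac solution export --help` for yours.

```python
import shlex


def export_command(solution_name: str, zip_path: str, managed: bool = False) -> list[str]:
    # Export a solution from the currently selected environment to a .zip file.
    cmd = ["pac", "solution", "export", "--name", solution_name, "--path", zip_path]
    if managed:
        cmd.append("--managed")  # export as a managed solution
    return cmd


def import_command(zip_path: str) -> list[str]:
    # Import a solution .zip into the currently selected environment.
    return ["pac", "solution", "import", "--path", zip_path]


if __name__ == "__main__":
    # Hypothetical solution name and paths, for illustration only.
    for cmd in (
        export_command("DailyProcess", "out/DailyProcess_managed.zip", managed=True),
        import_command("out/DailyProcess_managed.zip"),
    ):
        # Printing keeps the sketch side-effect free; to run it you could
        # pass cmd to subprocess.run(cmd, check=True) instead.
        print(shlex.join(cmd))
```

Building the arguments as lists rather than strings avoids quoting problems when solution names or paths contain spaces.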

Flows that are created as part of a solution are special 'solution-aware flows'. We made the mistake of starting the project without a solution, and it is not always simple (or even possible) to migrate existing flows into a solution. So we recommend starting your project with a solution for anything other than the most basic flows.

4. Use child flows

As you are building your flows, it is possible you will find that you have repeated patterns of steps or similar behaviours that you want to replicate, either within a flow or across flows. Power Automate does have a (currently in-preview) way of copying steps which can help with this, especially when using scopes:

But this approach isn't very DRY. It would be better if we could create parameterized, reusable groups of steps that can be called from within your flows - which is exactly what we can do with child flows.

Solutions are a prerequisite for using child flows, as mentioned above.

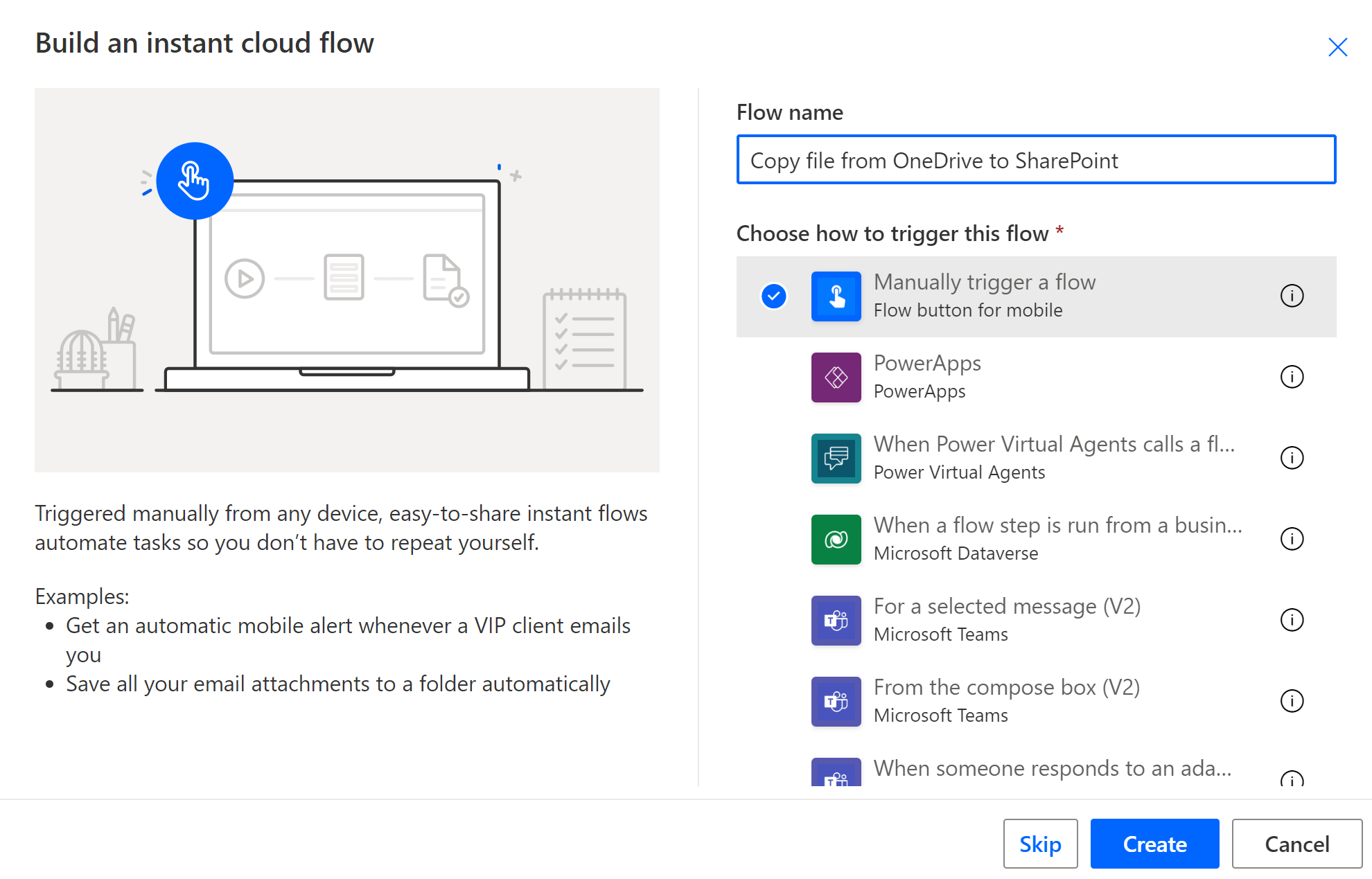

To create the child flow, add a new instant cloud flow to the solution with a manual trigger:

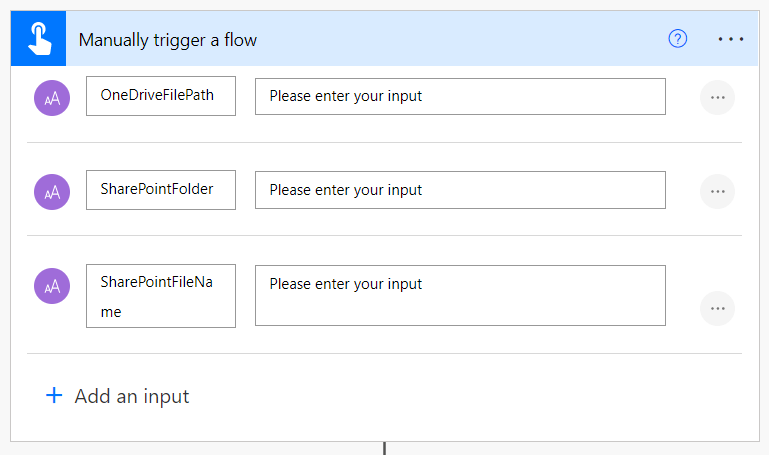

Inside the manual trigger you can add inputs which will act as the parameters to your child flow:

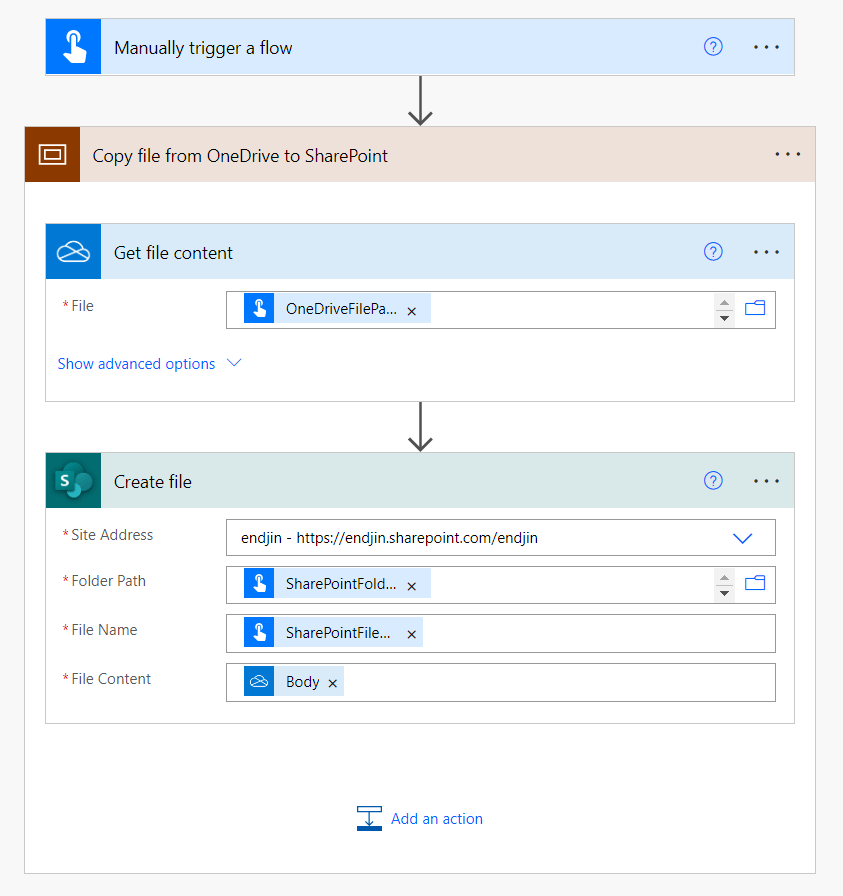

You can then add the reusable steps to the child flow, referencing your parameters (this is also where the copy/paste functionality can come in useful if you are refactoring the steps from an existing flow):

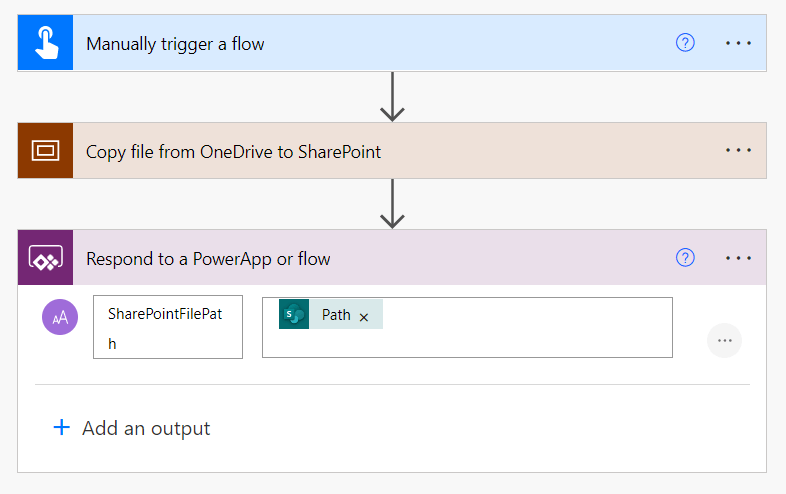

Finally, add a response step ('Respond to a PowerApp or flow' action) to the end of the flow. Here you can also optionally add outputs, which the calling parent flow can then access:

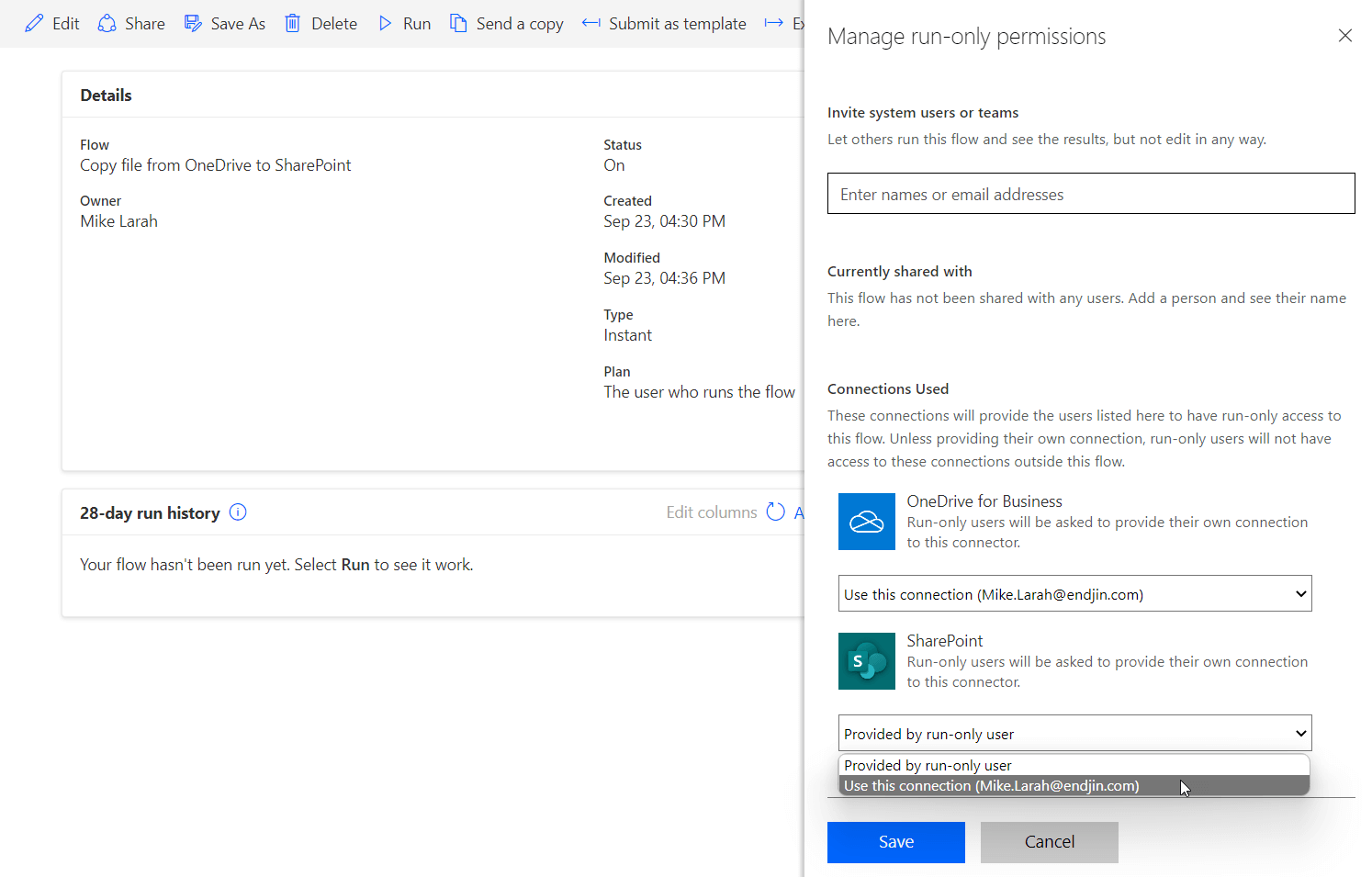
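If it helps to think of this in code, a child flow behaves much like a function: the manual trigger's inputs are its parameters, the steps are its body, and the outputs of the 'Respond to a PowerApp or flow' step are its return value. The Python analogy below is purely illustrative. The inputs (`folder`, `file_name`, `lines`), the outputs (`file_path`, `line_count`) and the `build_output_file` function are hypothetical, and nothing here is a Power Automate API.

```python
from typing import TypedDict


class ChildFlowOutputs(TypedDict):
    # Mirrors the outputs added to the 'Respond to a PowerApp or flow' step.
    file_path: str
    line_count: int


def build_output_file(folder: str, file_name: str, lines: list[str]) -> ChildFlowOutputs:
    # Parameters mirror the inputs defined on the child flow's manual trigger;
    # this body stands in for the reusable steps inside the child flow.
    path = f"{folder.rstrip('/')}/{file_name}"
    return {"file_path": path, "line_count": len(lines)}


# The parent flow calls the same child flow with different inputs instead of
# copying the steps, much like using the 'Run a Child Flow' action twice.
for folder, name, lines in [("/reports/", "names.txt", ["Ada", "Alan"]), ("/archive", "empty.txt", [])]:
    result = build_output_file(folder, name, lines)
    print(result["file_path"], result["line_count"])
# /reports/names.txt 2
# /archive/empty.txt 0
```

As with a function, a change to the shared steps only has to be made once, in the child flow, rather than in every copy.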

If the child flow uses any connections, you need to update it to use embedded connections. From the child flow's summary page, select 'Edit' in the 'Run only users' section, switch all connections from 'Provided by run-only user' to 'Use this connection', and save:

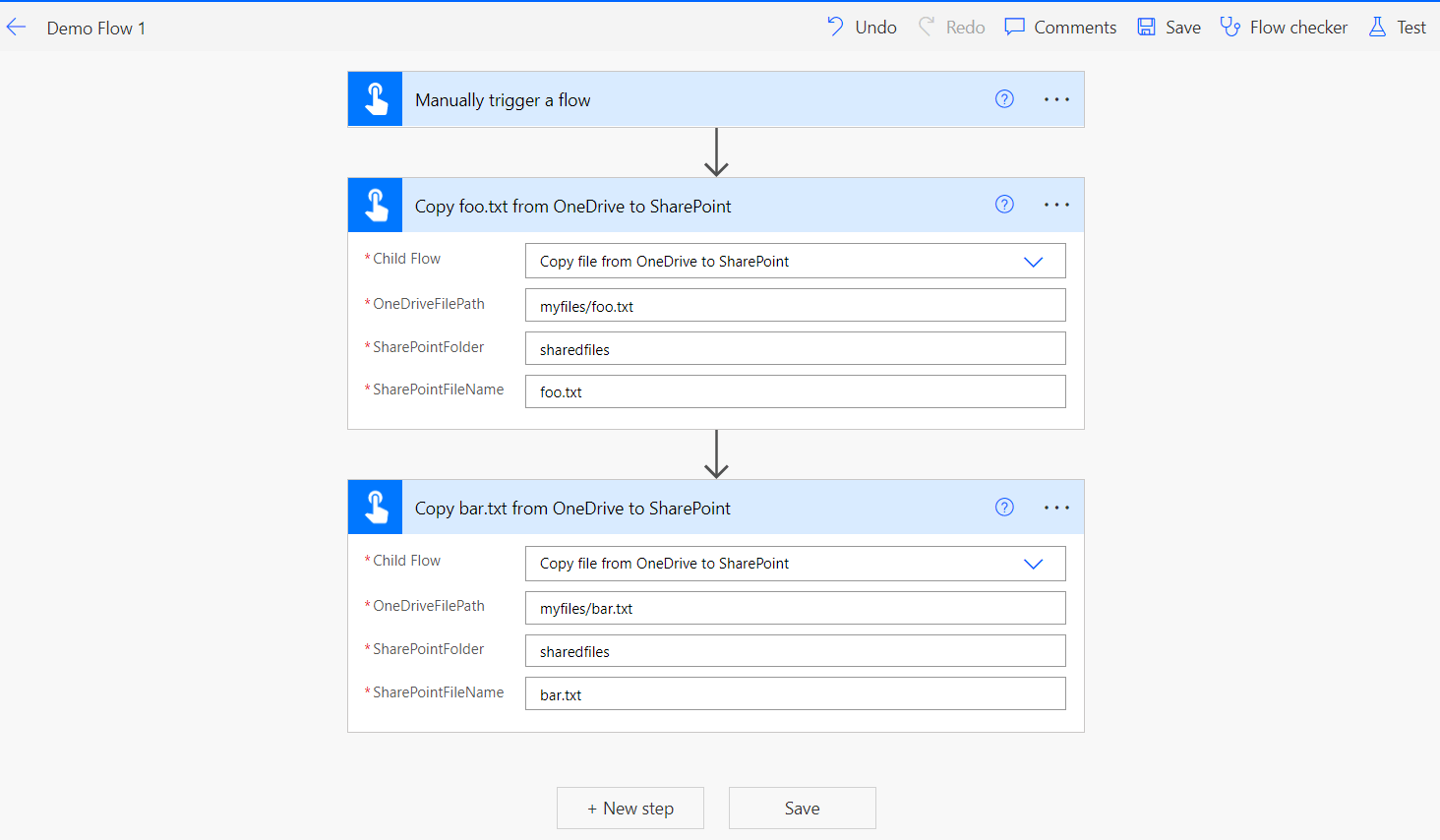

You can now reference this child flow from other parent flows in the same solution using the 'Run a Child Flow' action:

5. Keep environments separate

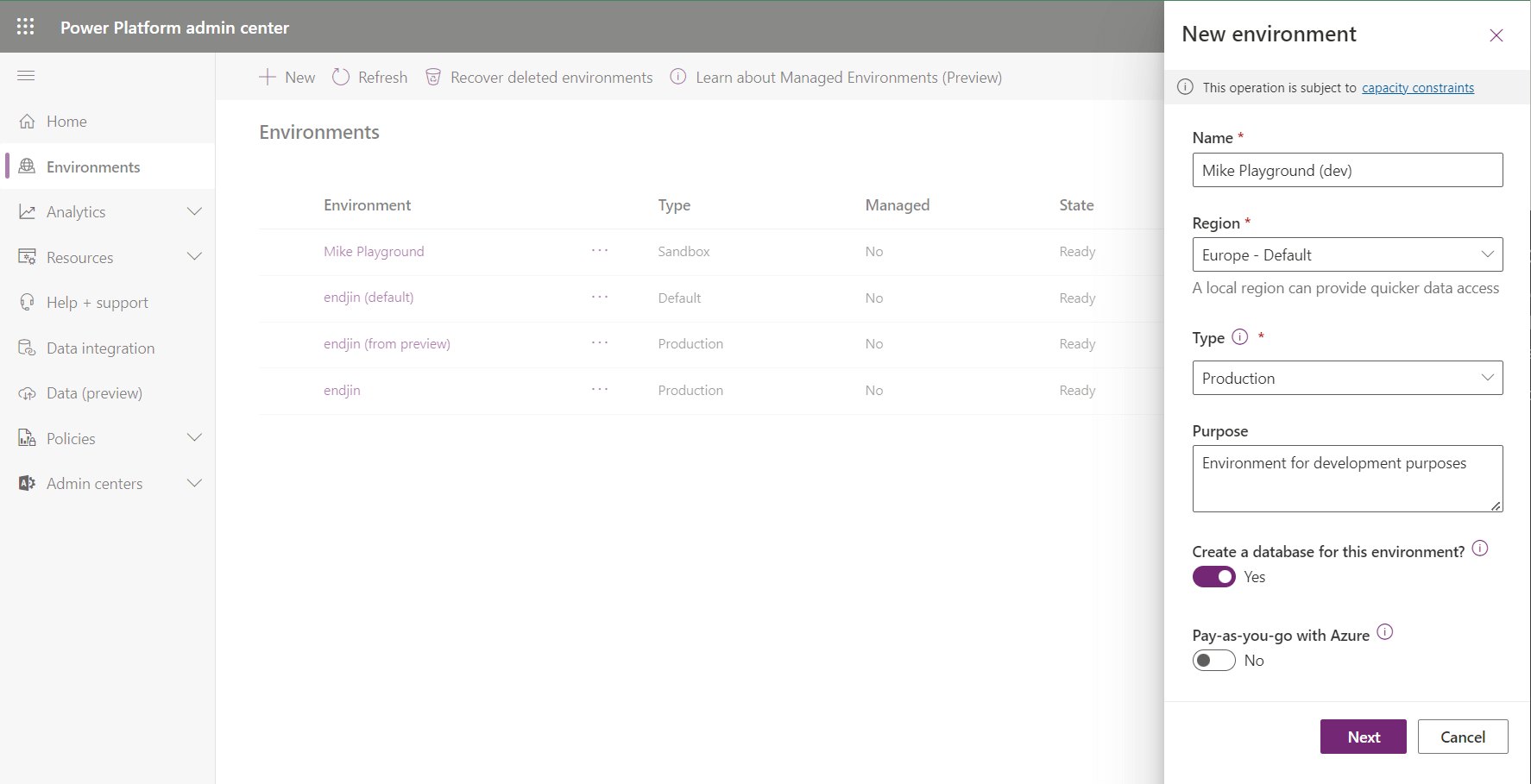

It's good practice to set up multiple environments for your flows. At a minimum, it is recommended to have a development and a production environment, so that flows can be developed without affecting the flows running in production (which may be business-critical). You can also add further environments as needed (e.g. if you are following a DTAP pattern: development, test, acceptance, production).

Environments for Power Automate can be created through the Power Platform admin center.

Once you have multiple environments set up, and if you are using solutions, you can set up ALM processes to move the solution between environments. Typically, you would use an unmanaged solution in your development environment, which you can then version, export, and import into your other environments as a managed solution (see Managed and Unmanaged Solutions for more details on managed vs. unmanaged solutions). As mentioned in Always use solutions, your ALM processes could be implemented either manually through the portal or automated via CI/CD pipelines.
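Solution versions use a `major.minor.build.revision` format, so the versioning step is easy to script. The Python sketch below shows one possible convention for bumping the version before each export: a release increments `minor` and resets the lower parts, and any other build increments `build`. Both the convention and the `bump_version` helper are hypothetical; choose whatever scheme suits your team.

```python
def bump_version(version: str, release: bool = False) -> str:
    """Bump a 'major.minor.build.revision' solution version string.

    Hypothetical convention: a release bumps minor and resets build/revision;
    otherwise build is bumped and revision is reset.
    """
    parts = [int(p) for p in version.split(".")]
    if len(parts) != 4:
        raise ValueError(f"expected major.minor.build.revision, got {version!r}")
    major, minor, build, _revision = parts
    if release:
        return f"{major}.{minor + 1}.0.0"
    return f"{major}.{minor}.{build + 1}.0"


print(bump_version("1.2.3.4"))                # 1.2.4.0
print(bump_version("1.2.3.4", release=True))  # 1.3.0.0
```

Bumping the version on every export makes it easy to tell which build of the managed solution is installed in each environment.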

You may be tempted to use a single environment and export/import your solution within that same environment (under different solution names). However, this does not work well for several reasons: it's not simple to rename a solution when importing it, and you cannot create an unmanaged version of a managed solution in the same environment. Given how simple it is to set up environments, we recommend always using separate environments for your solutions.