Avoiding deployment locking errors by running Web and Functions Apps from packages

We've had ongoing issues with DLLs being locked during deployments of web and functions apps. The specific case I'm going to talk about focuses on Azure Functions, but you can also run Web Apps from a package (though the Azure Pipelines tooling currently only works for functions, so for a web app you would need to upload the package manually).

The issues I'm focusing on all started when we introduced a timer trigger into one of our functions. If you tried to deploy when the function was about to run, was running, or had just run, some or all of the DLLs were locked and could not be updated, so the deployment failed. The suggested fix was to switch over to the new "deploy from package" option when deploying the functions. This fixes the file locking problem because, instead of deploying the individual DLLs, the function runs from a package file added to its directory.

To run from a package, you set WEBSITE_RUN_FROM_PACKAGE in the functions/web app's app settings. If you set this to 1, the app will run from a read-only zip file in the d:\home\data\SitePackages folder of the site. This folder must also contain a file called packagename.txt, which contains nothing but the name of the package to use. Alternatively, you can place the package into blob storage and instead set WEBSITE_RUN_FROM_PACKAGE to the location of that blob. You would need to set this all up manually if you are running a web app, but for Functions it can all be set up for you via build and release pipelines.
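If you do need to set the app setting yourself, one way is an Azure CLI step in a pipeline. This is a minimal sketch rather than the setup used in this post: the service connection, app and resource group names are hypothetical, and it assumes the package and packagename.txt are already in place.

```yaml
# Sketch only: 'my-service-connection', 'my-function-app' and
# 'my-resource-group' are hypothetical names, not values from this post.
steps:
- task: AzureCLI@2
  displayName: 'Enable run from package'
  inputs:
    azureSubscription: 'my-service-connection'
    scriptType: 'pscore'
    scriptLocation: 'inlineScript'
    # Sets WEBSITE_RUN_FROM_PACKAGE=1 on the functions app.
    inlineScript: |
      az functionapp config appsettings set --name my-function-app --resource-group my-resource-group --settings WEBSITE_RUN_FROM_PACKAGE=1
```

For a web app the equivalent command would be `az webapp config appsettings set`, and for the blob storage variant the value would be the blob's URL rather than 1.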

In this blog post I will run through the process of switching from the Web Deploy process to the "deploy from package" option. So, to automate this:

Updates to the build pipeline

So originally our build pipeline looked like this:

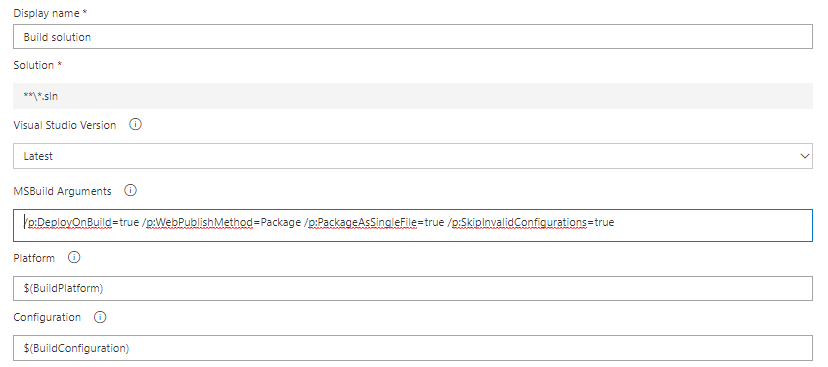

With the build step looking like this:

This takes the build output and puts it into the build's artefact staging directory. The publish functions step then looked like this:

An MSBuild package is created using the functions project from the build output. The artefact name is set to FunctionApp, which means that the published functions package will be placed into the "_BUILDNAME/FunctionApp" folder (where BUILDNAME is the name of the build pipeline) in the "DefaultWorkingDirectory" of any release pipelines that download the build artefact.

In a release pipeline which has downloaded the build artefact, the MSBuild package will be at the location:

"$(System.DefaultWorkingDirectory)/_BUILDNAME/FunctionApp/FUNCTIONAPPNAME.zip"

Where the zip file's name corresponds to the name of the functions app project in the original build output.

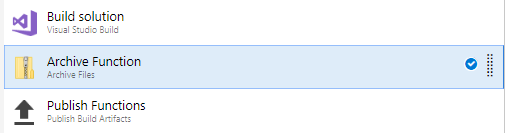

However, the deploy from package method cannot run from an MSBuild package. It must be a deployment-ready version of the package, so a step needed to be added to the build:

This adds an archived package ("Function.zip"), ready for deployment, into the artefact staging directory at:

$(Build.ArtifactStagingDirectory)/BUILDNAME/Function.zip

If you are just deploying a Functions App (so nothing needs the Web Deploy package to be created as part of the build), you can also remove the MSBuild arguments from the build step. This is because the functions deployment zip is created directly from the functions project build output.

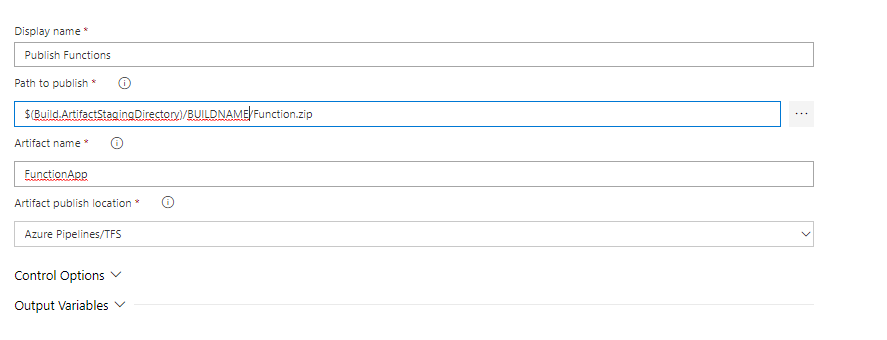

The publish functions task is then updated to instead publish the archived package:

This file is then published (as the MSBuild package was before) from the new file location. When published, the package will have the name Function.zip instead of the project name, but it will be added to the same location as before.

So, the release will then use the path

"$(System.DefaultWorkingDirectory)/_BUILDNAME/FunctionApp/Function.zip"

to retrieve the functions package.

(The exciting thing here is that you could version this with the build ID, or create different zip files depending on whether your build was release/dev/test, but for simplicity let's just use the one package.)
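As a rough sketch of the versioning idea, the archive step could include the build ID in the file name. This assumes the ArchiveFiles@2 task and the same placeholder paths used in this post. $(Build.BuildId) is a predefined Azure Pipelines variable, and the "Function-" prefix is just an illustrative naming choice.

```yaml
# Sketch only: BUILDNAME and FUNCTIONAPPNAME are the same placeholders
# used elsewhere in this post.
- task: ArchiveFiles@2
  displayName: 'Archive Function (versioned)'
  inputs:
    rootFolderOrFile: 'Solutions/FUNCTIONAPPNAME/bin/Release/netcoreapp2.1/'
    includeRootFolder: false
    archiveType: 'zip'
    # Illustrative naming: one zip per build, with the build ID in the name.
    archiveFile: '$(Build.ArtifactStagingDirectory)/BUILDNAME/Function-$(Build.BuildId).zip'
    replaceExistingArchive: true
```

The publish step and the package path in the release would then need to point at the versioned file name.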

Updates to the release pipeline

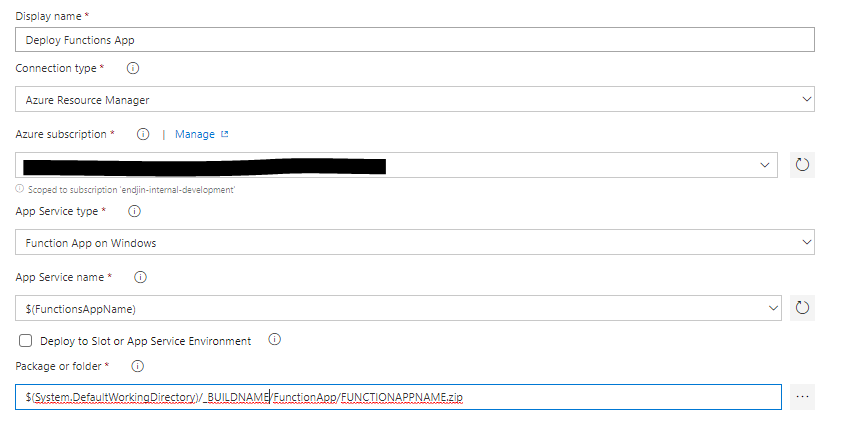

The functions app deployment in the release pipeline is shown here:

This is an Azure App Service deployment. Originally it looked like this:

Here the package to deploy is the MSBuild package. This was updated to our archived package file:

"$(System.DefaultWorkingDirectory)/_BUILDNAME/FunctionApp/Function.zip"

And the "deploy as package" option was selected.

Then, when the release runs, the deployment package is added as a read-only file to the data folder of the functions app. The WEBSITE_RUN_FROM_PACKAGE = 1 setting and the packagename.txt file are added for you automatically, and the functions app simply runs from the read-only package in its site directory. When the function runs, it takes the deployed zip file and unzips it to make copies of the DLLs. It then no longer needs access to the zip file in order to run, so the file will not be locked if a deployment is attempted while the function is running.

Now, I've shown how to deploy using the Azure DevOps interface, but we've recently made the switch over to defining our builds using YAML. (This is great because it means you define the build as part of the solution, and updates are all included in source control etc.) So, if you're defining your builds that way:

Our original build looked like this:

    - task: VSBuild@1
      inputs:
        solution: '$(solution)'
        msbuildArgs: '/p:DeployOnBuild=true /p:WebPublishMethod=Package /p:PackageAsSingleFile=true /p:SkipInvalidConfigurations=true'
        platform: '$(buildPlatform)'
        configuration: '$(buildConfiguration)'

    - task: PublishBuildArtifacts@1
      displayName: 'Publish Artifact: Function App'
      inputs:
        PathtoPublish: 'Solutions/FUNCTIONAPPNAME/bin/Release/netcoreapp2.1/'
        ArtifactName: FunctionApp

And once updated it looked like this:

    - task: VSBuild@1
      inputs:
        solution: '$(solution)'
        platform: '$(buildPlatform)'
        configuration: '$(buildConfiguration)'

    - task: ArchiveFiles@2
      displayName: 'Archive Function'
      inputs:
        rootFolderOrFile: 'Solutions/FUNCTIONAPPNAME/bin/Release/netcoreapp2.1/'
        includeRootFolder: false
        archiveFile: '$(Build.ArtifactStagingDirectory)/BUILDNAME/Function.zip'

    - task: PublishBuildArtifacts@1
      displayName: 'Publish Artifact: Function App'
      inputs:
        PathtoPublish: '$(Build.ArtifactStagingDirectory)/BUILDNAME/Function.zip'
        ArtifactName: FunctionApp

(Notice that the build arguments have now been removed.)
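The release side can be expressed in YAML too. The following is only a sketch, not the pipeline used in this post: it uses the AzureFunctionApp@1 task rather than the Azure App Service deployment task shown in the screenshots, the service connection and app names are hypothetical, and the package path is the one from the classic release pipeline, so it may need adjusting to wherever your pipeline downloads the artefact.

```yaml
# Sketch only: 'my-service-connection' and 'my-function-app' are
# hypothetical names, not values from this post.
steps:
- task: AzureFunctionApp@1
  displayName: 'Deploy Function App (run from package)'
  inputs:
    azureSubscription: 'my-service-connection'
    appType: 'functionApp'
    appName: 'my-function-app'
    # Path as used in the release pipeline described in this post.
    package: '$(System.DefaultWorkingDirectory)/_BUILDNAME/FunctionApp/Function.zip'
    # Asks the task to deploy the zip using the run from package method.
    deploymentMethod: 'runFromPackage'
```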