Power BI Dataflow refresh polling

If you're a frequent user of the Power BI REST API and Power BI Dataflows, you may have come across the problem that there's seemingly no programmatic way to get the refresh history of a Dataflow. This is unlike Datasets - there is an endpoint for that.

The ability to know the status of a refresh operation is useful when you're performing automated operations, and you need to know that something has succeeded or failed before deciding what to do next. For example, a desired feature in the Power BI Service is to be able to refresh a dataflow, and automatically refresh a dataset that depends on that dataflow. Without a refresh history endpoint, this is made more complicated than necessary.

You could just schedule a dataset refresh to occur 30 minutes after a dataflow refresh, but if that dataflow refresh fails for any reason, or hasn't finished refreshing, then you'll run into problems.

Alternatively, there's a nice workaround written by Olivier Travers to have a Logic App/Power Automate workflow monitoring an inbox to which Dataflow refresh notifications are configured, but with this method one has to add more moving parts to one's solution, and there'd be a reliance on notification emails being sent successfully and in a timely manner upon refresh completion.

It turns out it is possible to programmatically retrieve a Dataflow's refresh history, enabling you to determine a refresh operation's current refresh status. This can serve as a polling endpoint, so you can determine when to perform subsequent operations based on the current refresh status.

The technique for getting the refresh history for a Dataflow outlined in this blog is not ideal, but it works (at least, as of March 2020). All you need is:

- An AAD bearer token for Power BI (you can get one of these numerous ways - probably the easiest is using the Power BI PowerShell Get-PowerBIAccessToken cmdlet)

- The base url of the API the UI uses to make requests - this differs based on the region of your Power BI tenant. I've highlighted this in one of the screenshots below.

Currently, when you trigger a Dataflow refresh, you get a 200 code and an empty response. This 200 response code doesn't mean that the underlying refresh operation has succeeded - merely that the request has succeeded. This response code is correct - the problem is that there is no way of tracking how the underlying operation is performing.

As mentioned, unlike Datasets, there's no "official" endpoint in the REST API to get the refresh history, but the fact that it's possible to view the refresh history in the UI made me wonder - can I get my hands on the data that populates that dialogue box?

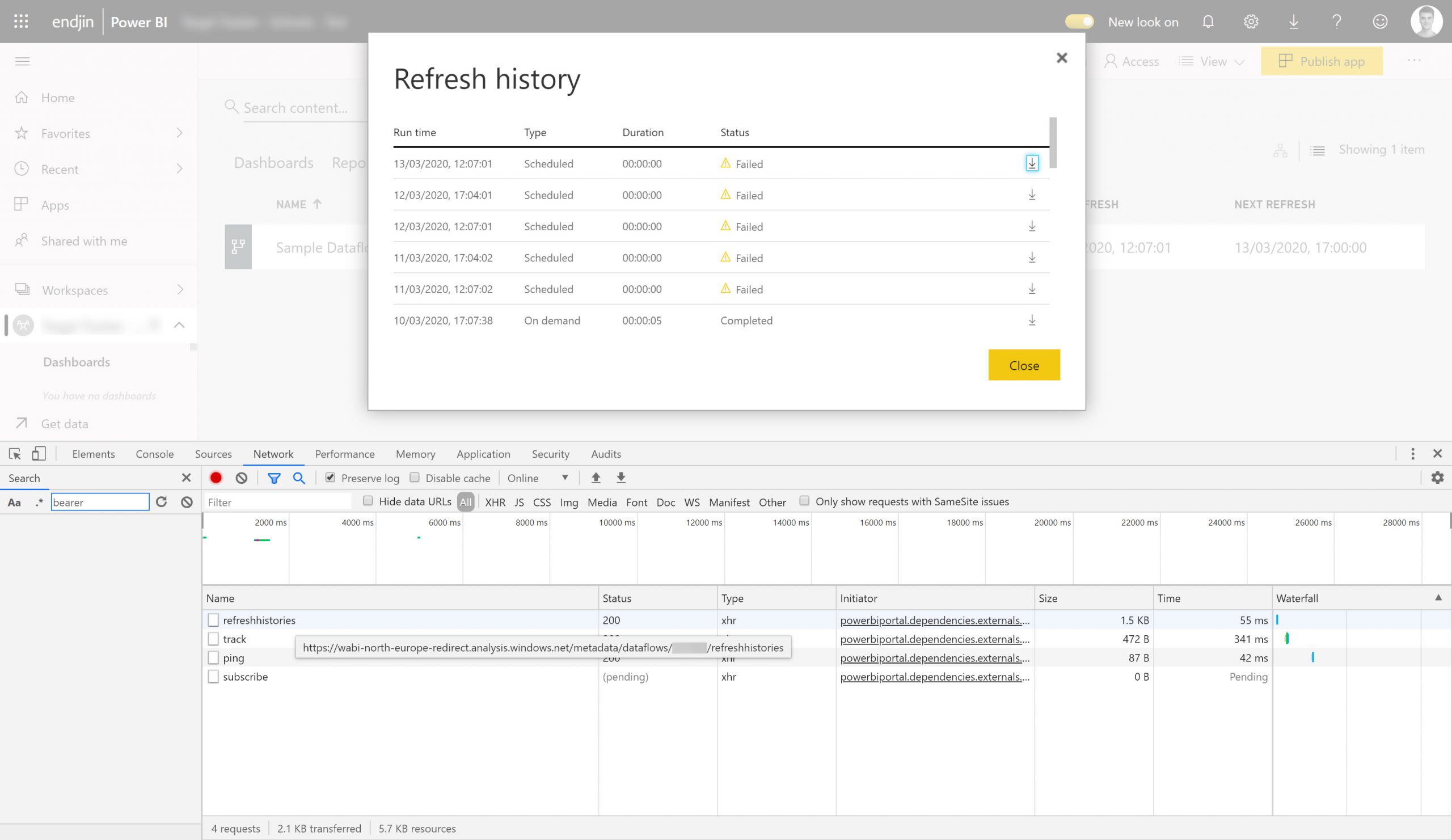

If we enter the dev tools in the browser (I'm using Chrome), then navigate to the Dataflow refresh history, we can see the request that's made to retrieve the history:

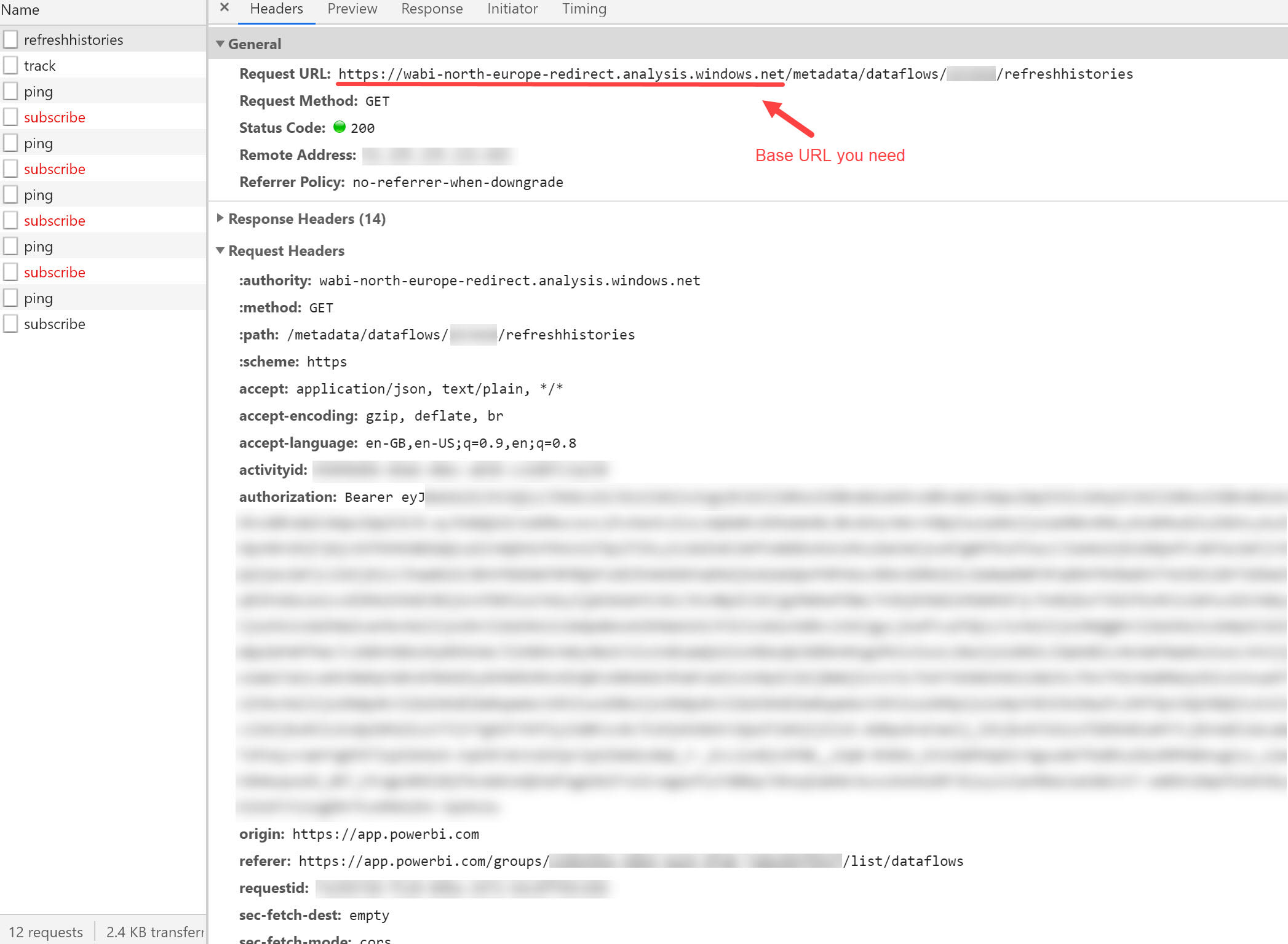

Drilling into that request, we can see this:

The thing I've blurred out in the Request URL is an ID of some sort identifying my Dataflow. This isn't the Dataflow ID that you can see in the url when navigating to a Dataflow (as you can probably tell by its length) - it's 'some other ID'. I've also pointed out the baseUrl for my tenant - yours will be different if your tenant is in a different location - be sure to check. Anyway, here we're making a request to https://<baseUrlForYourRegion>/metadata/dataflows/<someSortOfId>/refreshhistories, and the response looks like this:

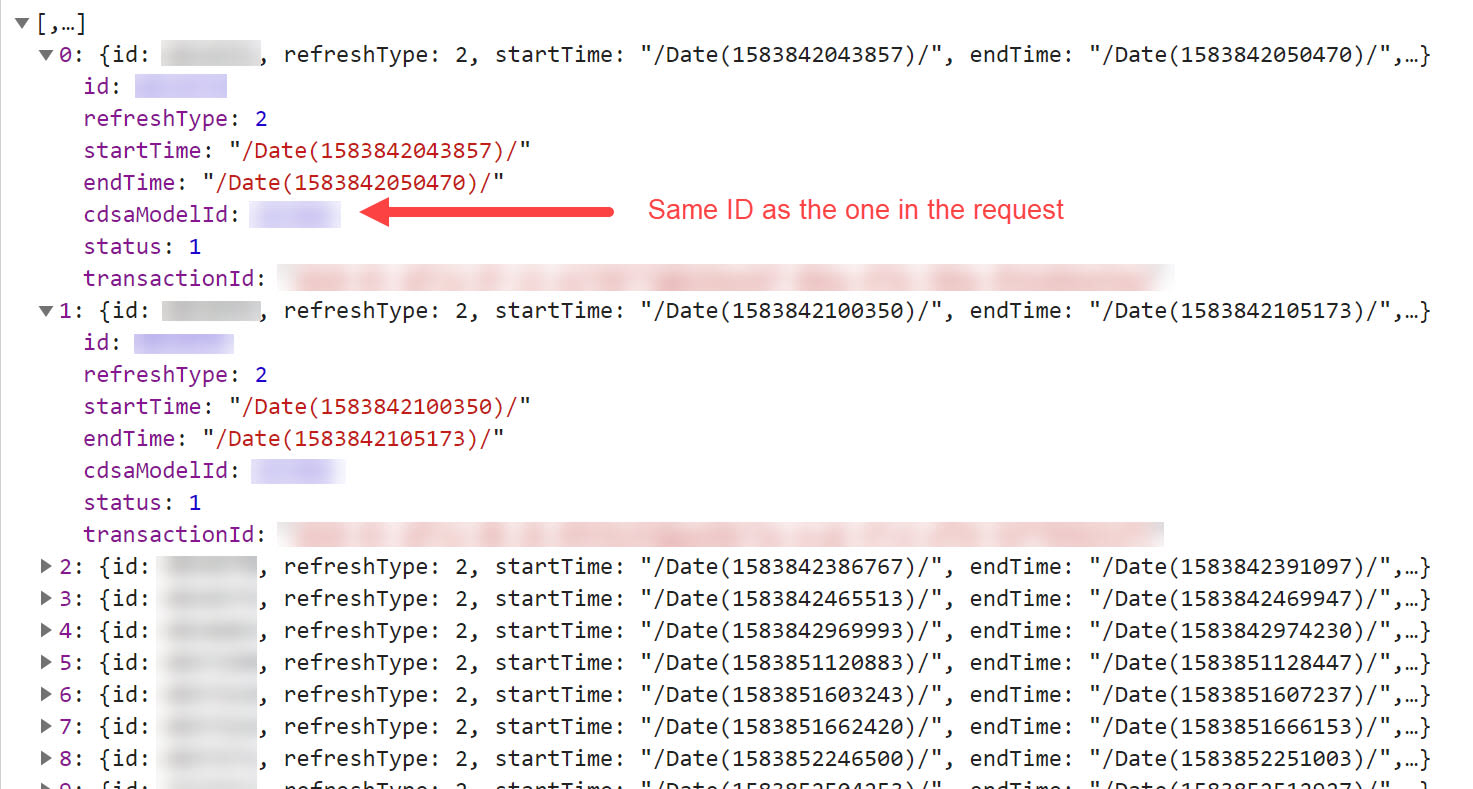

As shown in the screenshot, the cdsaModelId is the same as the ID that forms part of the request URL. Also shown in the response is a status attribute. As you've probably guessed, this is the status of our refresh, represented as an int (which is probably an enum behind the scenes). After a bit of investigation, we found the mapping to be something along the lines of:

- 1: Completed successfully

- 2: Failed (Incorrect credentials/Dataflow schema issues/internal error)

- 4: In progress

- 5: ? (Think it's something to do with a now-deleted-Dataflow that used to be on a V2 workspace which belonged to a shared capacity... Maybe?)

- 8: Failed (Workspace assigned to capacity but capacity switched off)

Note: in brackets are the errors we've seen that have resulted in that particular status code. They're also the only codes that we've seen so far - it would be fair to assume that, given the gaps in the range of numbers above, there almost certainly are more.

I tried making the same request as above in Postman, using the same bearer token. Everything worked fine.

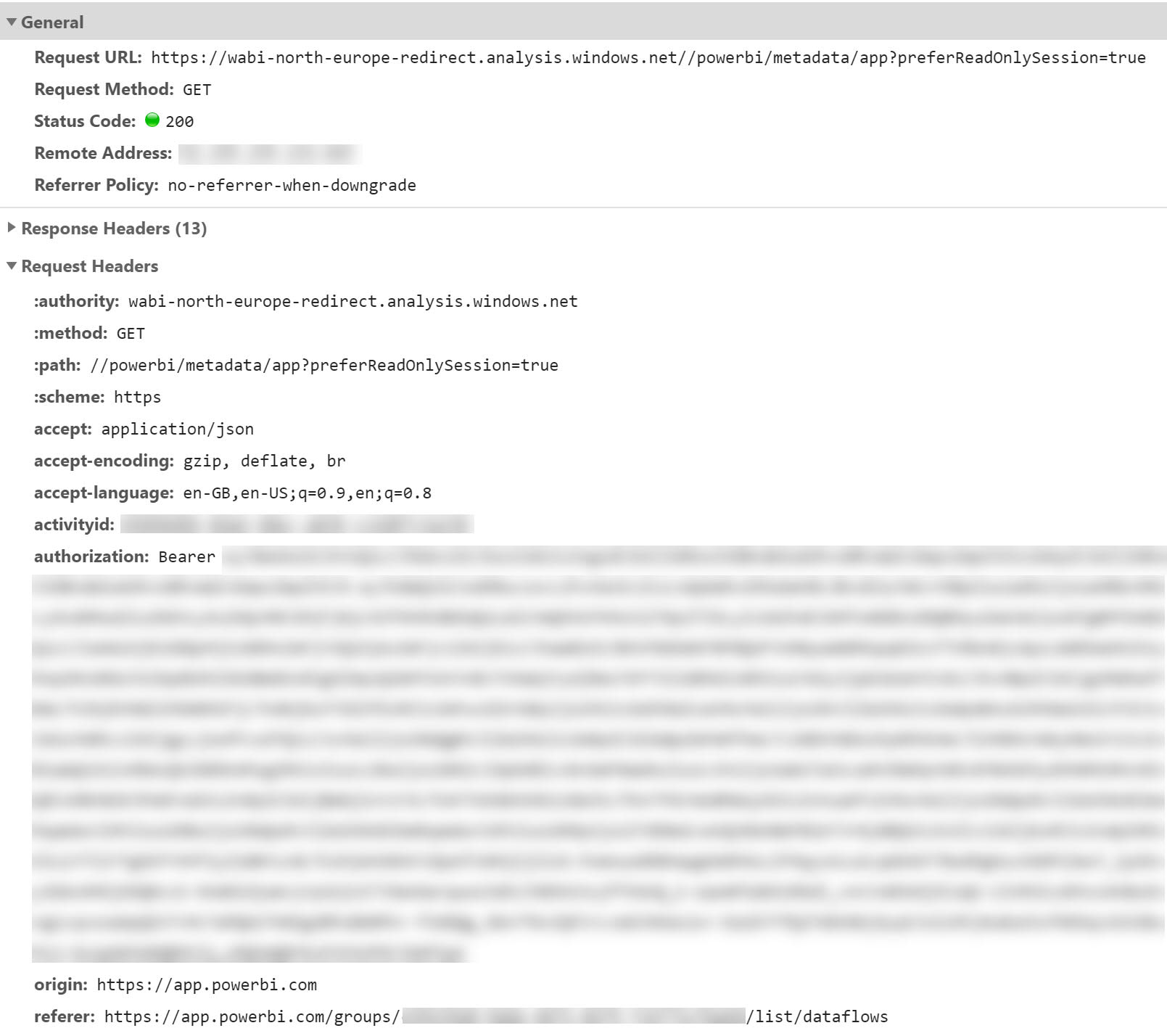

I bet you're now thinking "That's great, but where can I get that cdsaModelId?" I had the same thought. As it transpires, if you hit refresh on your page whilst in the Power BI Service, the browser will make a request that looks like this:

As you can see, the endpoint https://<baseUrlForYourRegion>//powerbi/metadata/app?preferReadOnlySession=true doesn't have any notion of the workspace you're currently in. From what I can tell, the request seems to return lots of details about all the artifacts in all the (V2?) workspaces that you have access to in your tenant.

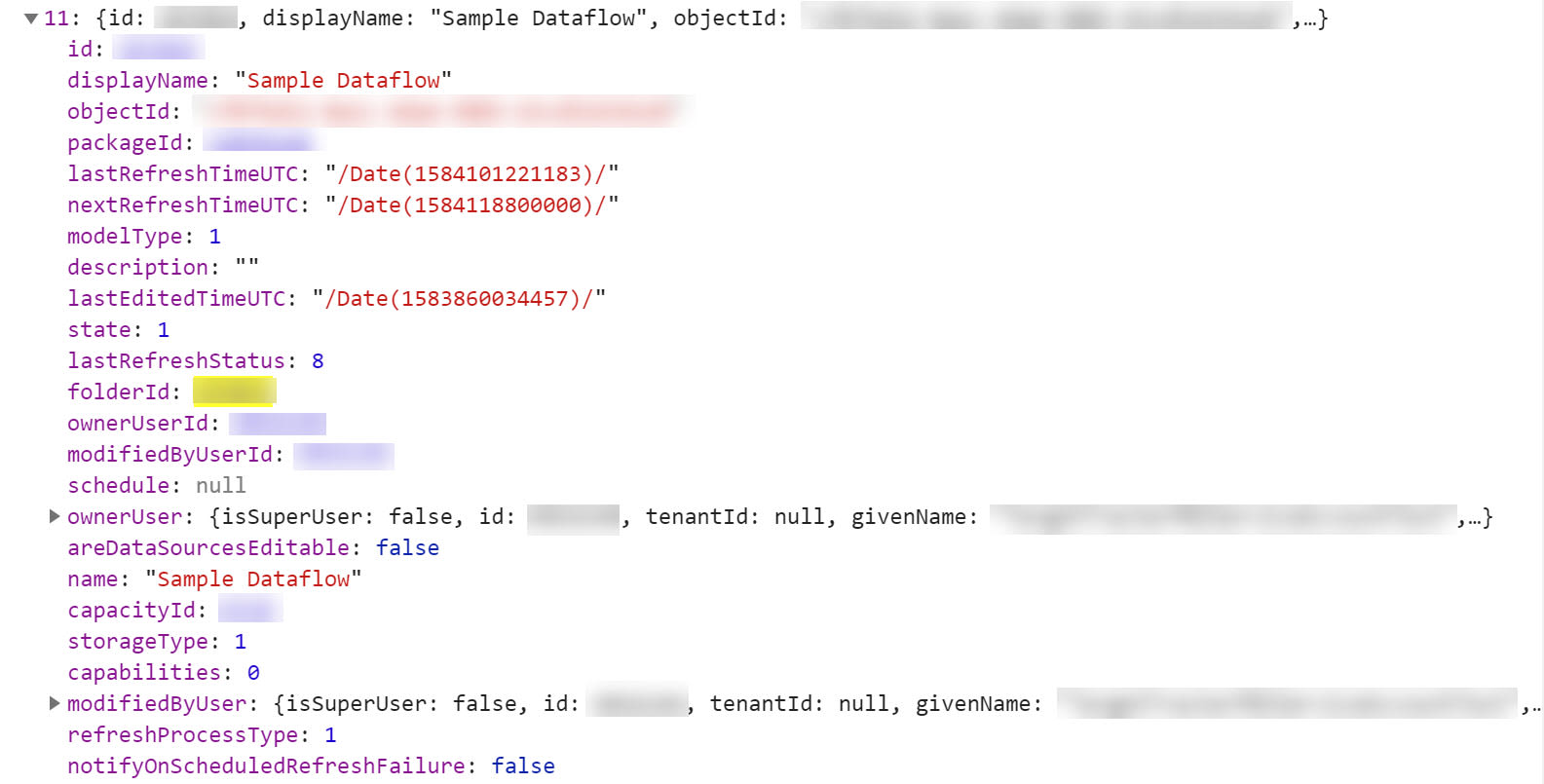

As you can imagine, the response payload is therefore quite large. It does contain what we need, though. There's an attribute called cdsaModels which seems to list out the dataflows that live in V2 workspaces that you have access to. I think it may also list out some deleted dataflows in those same workspaces (but that might be wrong). Anyway, the object we're after looks like this:

The id is the cdsaModelId we saw at the start of the blog. The displayName is the name of your Dataflow. More crucially, the objectId is the ID of your Dataflow that you see in the url when you navigate to your Dataflow (i.e. the ID that you use for interacting with a specific dataflow in the Dataflows REST API).

This means that as long as you have the object ID of your Dataflow, you can hit this endpoint, extract the cdsaModels attribute, and easily locate your target Dataflow.

If you have a keen eye, you may have noticed that the above object actually includes a lastRefreshStatus attribute. This means that we don't even need the first endpoint we spoke about earlier. However, this endpoint's response is much larger - if you're wanting to poll frequently (every couple of seconds), you may want to hit this endpoint first, grab the cdsaModelId, and then poll the first endpoint we discussed.

And that's it!

Hacky, I know. But this solution to get the refresh history is better than no solution at all! Hopefully the Power BI team will add a "Get Refresh History In Group" endpoint to the Dataflows API in the near future, but for now this method should serve your needs.