Integration Testing Azure Functions with Reqnroll and C#, Part 2 - Using step bindings to start Functions

TL;DR - This series of posts shows how you can integration test Azure Functions projects using the open-source Corvus.Testing.AzureFunctions.ReqnRoll library and walks through the different ways you can use it in your Reqnroll projects to start and stop function app instances for your scenarios and features.

In the first post in this series, we introduced the Corvus.Testing.AzureFunctions.ReqnRoll library. In this post, we'll look at the simplest way to use it to start function apps for testing: the provided step bindings.

This is demonstrated in ScenariosUsingStepBindings.feature in the Corvus.Testing.AzureFunctions.ReqnRoll.Demo.Specs project.

The Corvus.Testing.AzureFunctions.ReqnRoll project contains a Given step definition for the following pattern:

```csharp
[Given("I start a functions instance for the local project '(.*)' on port (.*) with runtime '(.*)'")]
```

If you include a step matching this pattern in your scenario, the functions defined in the specified project will be started, with any HTTP functions listening on the specified port. If your function app doesn't have any HTTP endpoints, you can supply a dummy value for the port. The runtime is the target framework of your functions project: for Functions v4 this will most likely be net8.0, though net9.0 and net10.0 are also options depending on what your project targets.
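To see how the three placeholders map onto a real step, here's a small sketch that runs the pattern against an example step with `System.Text.RegularExpressions`. It is not the library's code: the project name `MyFunctionApp` and port 7075 are hypothetical, and anchoring the pattern with `^`/`$` and parsing the port as an `int` are assumptions made here to mimic whole-step matching and typed parameters.

```csharp
using System;
using System.Text.RegularExpressions;

// Illustrative sketch only: shows which parts of a step's text the binding
// pattern captures. Not part of Corvus.Testing.AzureFunctions.ReqnRoll.
public static class StepPatternDemo
{
    // Same pattern as the binding, anchored so it must match the whole step text.
    private const string Pattern =
        "^I start a functions instance for the local project '(.*)' on port (.*) with runtime '(.*)'$";

    public static (string Project, int Port, string Runtime) Parse(string stepText)
    {
        Match match = Regex.Match(stepText, Pattern);
        if (!match.Success)
        {
            throw new ArgumentException($"Step text does not match the pattern: {stepText}");
        }

        // Group 1: project, group 2: port (assumed numeric), group 3: runtime.
        return (
            match.Groups[1].Value,
            int.Parse(match.Groups[2].Value),
            match.Groups[3].Value);
    }
}
```

In a feature file, the matching step would read `Given I start a functions instance for the local project 'MyFunctionApp' on port 7075 with runtime 'net8.0'`, and `Parse` on the text after `Given` yields the project `MyFunctionApp`, port 7075 and runtime `net8.0`.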

The project to run is currently resolved by walking up the folder tree until a folder is found that, when combined with the function project name, runtime and build folder, forms a valid path.
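A minimal sketch of that kind of lookup follows. It is an illustration, not the library's implementation: the `ProjectLocator.FindProjectOutput` name is hypothetical, and the layout `<ancestor>/<projectName>/bin/<configuration>/<runtime>` is an assumption about what "build folder" means here.

```csharp
using System;
using System.IO;

// Hypothetical sketch of resolving a function project's build output by
// walking up the folder tree. Assumes output lives at
// <ancestor>/<projectName>/bin/<configuration>/<runtime>.
public static class ProjectLocator
{
    public static string? FindProjectOutput(
        string startDirectory, string projectName, string runtime, string configuration = "Debug")
    {
        DirectoryInfo? current = new DirectoryInfo(startDirectory);

        // Try each ancestor in turn, stopping at the filesystem root.
        while (current is not null)
        {
            string candidate = Path.Combine(current.FullName, projectName, "bin", configuration, runtime);
            if (Directory.Exists(candidate))
            {
                return candidate;
            }

            current = current.Parent;
        }

        // No ancestor produced a valid path.
        return null;
    }
}
```

Starting from the specs project's own output folder (for example `AppContext.BaseDirectory`), this finds a sibling function project's output as long as both projects share a common ancestor folder. Returning `null` rather than throwing leaves the caller free to report which project and runtime it was looking for.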

As well as bindings for these steps, there's an additional AfterScenario hook that tears down the function instances they start. If necessary, you can start multiple function apps in a single scenario using these bindings.
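Because several instances can be started in one scenario, the teardown has to keep track of every one of them. Here's a hypothetical sketch of that bookkeeping; the `ScenarioInstanceTracker` type and its members are invented for illustration and are not part of Corvus.Testing.AzureFunctions.ReqnRoll. Each started instance registers a stop callback, and a Reqnroll `[AfterScenario]` hook would then call `StopAll`.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical sketch: tracks every instance started during a scenario so
// that a single after-scenario hook can stop them all.
public sealed class ScenarioInstanceTracker
{
    private readonly List<(string Name, Action Stop)> instances = new();

    // Called once for each instance a step starts.
    public void Register(string name, Action stop) => instances.Add((name, stop));

    // Stops instances in reverse start order, carrying on if one fails,
    // and returns a description of each failure.
    public IReadOnlyList<string> StopAll()
    {
        var failures = new List<string>();
        for (int i = instances.Count - 1; i >= 0; i--)
        {
            try
            {
                instances[i].Stop();
            }
            catch (Exception ex)
            {
                failures.Add($"{instances[i].Name}: {ex.Message}");
            }
        }

        instances.Clear();
        return failures;
    }
}
```

Catching each failure individually matters here: if one instance fails to stop cleanly, the others are still shut down rather than being left running into the next scenario.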

Viewing function output

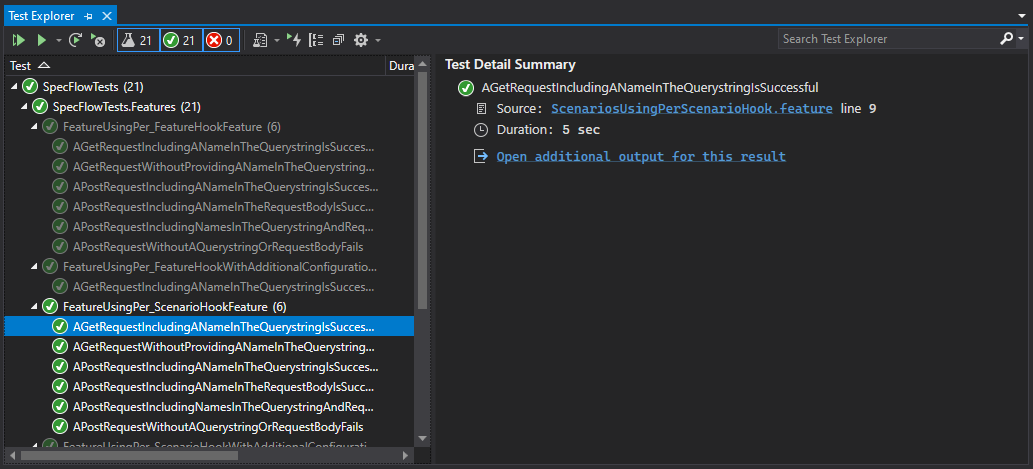

Once the test run is complete, output from the function app can be seen in the Test Detail Summary. In Visual Studio, you can view this in Test Explorer by selecting the scenario that has been executed:

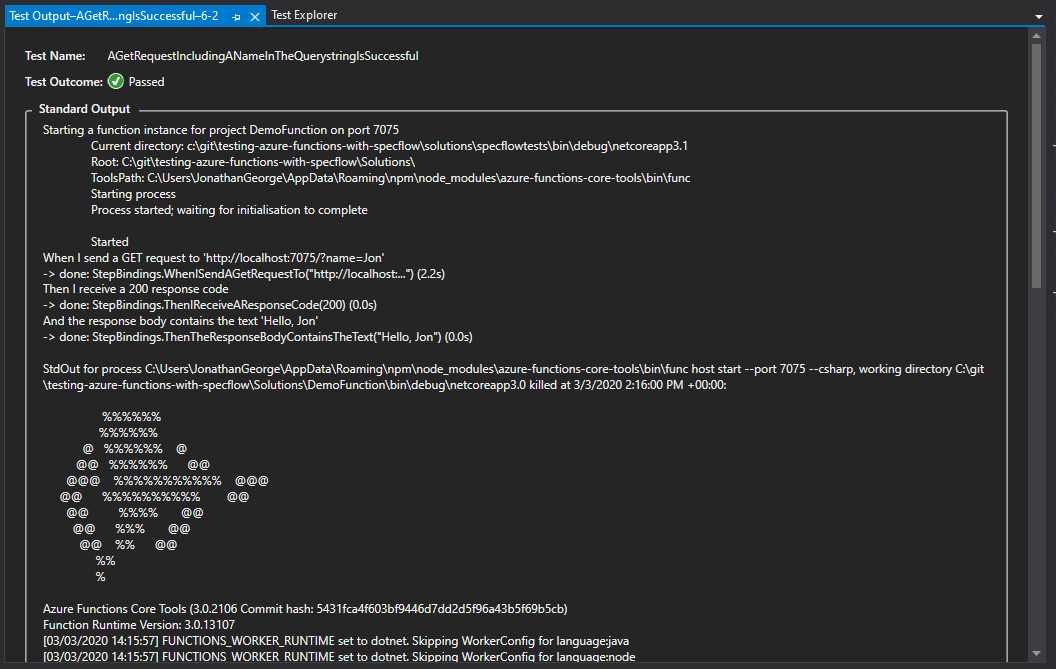

Clicking the link "Open additional output for this result" shows Reqnroll's standard output capture.

As you can see from the screenshot above, this starts with the output from the BeforeScenario binding, which shows the solution and runtime locations. If the function app failed to start, this is where you'll most likely find the reason.

This is followed by Reqnroll's standard per-step output. Finally, the output from the AfterScenario hook is shown; this is where the StdOut and StdErr of each function app are written.

Note that the log shown in this window is often truncated. When it is, you'll see a message explaining how to access the full log by copying it into another tool.

Advantages to this method

Using step bindings in this way makes it crystal clear to the developer what's going on as part of their spec. You can easily see which functions are being run and on what ports.

Disadvantages to this method

Whilst it's nice for developers to see exactly what technical setup is taking place, this does go against the goals of Behaviour Driven Development. Specifically, we should be striving to make our features readable in the end user's language. When testing an API with a BDD spec, you could argue that the end user is a technical one, since the consumers of APIs are most likely to be developers, but even so, this step is overly technical to include in your scenarios.

In the next post, I'll show how this problem can be addressed by using Reqnroll hooks to start and stop function apps.